By Hans Henseler, Professor of Digital Forensics & E-Discovery, Leiden University of Applied Sciences, and Senior Digital Forensic Scientist at the Netherlands Forensic Institute.

Artificial Intelligence (AI) is becoming a game changer for a safer society, and its deployment will dramatically change the field of forensic investigation. According to Hans Henseler, ChatGPT is already accelerating that deployment. It is not the ideal digital sleuth, but it can certainly become a smart assistant.

AI technology is already being used in facial and speech recognition, for example. In the case of the chat service Encrochat, 25 million intercepted messages were analyzed with deep learning techniques to filter out criminal activities such as kidnappings and liquidations, so that these could be prevented[1]. Since ChatGPT became available in late November 2022, the deployment of AI has gained momentum. ChatGPT is a large language model developed by OpenAI that has attracted tremendous attention in recent months for its unique ability to answer questions in a natural dialogue on the wide range of topics it encountered during training.

ChatGPT appears to be a smart student, and it may also help detectives investigate cases involving digital evidence it has never seen before more efficiently and effectively. For example, by translating investigative questions in natural language into structured search queries, it lets detectives find the right evidence faster without having to learn a sophisticated query language (see sidebar 1, “Helping to formulate search questions”). ChatGPT can also read through digital traces such as e-mails, instant messages and browser history and summarize them on demand, allowing investigators to quickly see who did what, where and when (see sidebar 2, “Summarizing information”). Finally, ChatGPT can analyze links between data, such as e-mail addresses or phone numbers that are mentioned repeatedly, allowing investigators to quickly and interactively identify key individuals and relevant subjects.
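To make the idea of link analysis concrete, here is a minimal sketch that does not use a language model at all. It is an illustrative assumption, not ChatGPT's or any forensic tool's method: simple regular expressions pick out e-mail addresses and phone numbers, and a counter shows which ones recur across messages. The function name `count_identifiers`, the patterns and the sample messages are all hypothetical.

```python
import re
from collections import Counter

# Simplified patterns (assumption). Real e-mail addresses and phone numbers
# come in many more formats, and the phone pattern will also match things
# like dates ("2022-07-01"), so expect false positives.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def count_identifiers(messages):
    """Count how often each e-mail address and phone number is mentioned
    across a list of message texts."""
    counts = Counter()
    for text in messages:
        for email in EMAIL_RE.findall(text):
            counts[email.lower()] += 1
        for phone in PHONE_RE.findall(text):
            # Normalize by removing spaces and dashes so that
            # "+31 6 1234 5678" and "+31612345678" are counted together.
            counts[re.sub(r"[\s-]", "", phone)] += 1
    return counts


# Hypothetical sample messages.
messages = [
    "Call me on +31 6 1234 5678 or mail jan@example.org",
    "Jan says: send it to jan@example.org tonight",
    "New number: +31612345678",
]
for identifier, n in count_identifiers(messages).most_common():
    print(identifier, n)
```

Identifiers that show up in many conversations are candidates for a closer look; a language model could then be asked what the messages mentioning them have in common.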

Other researchers in the E-Discovery field also believe that ChatGPT and related techniques can play an important role here. In a recent article in Legaltech News on Law.com, “What will E-Discovery Lawyers do after ChatGPT?”[2], the authors describe a number of experiments in which they ask ChatGPT to construct a complex Boolean query from a fairly simple question. As for content, ChatGPT cannot answer questions about information it has not seen before. However, ChatGPT is familiar with the well-known Enron case, which is extensively discussed on the Internet and frequently used in E-Discovery education. When asked for examples of how Enron violated U.S. Federal Government accounting standards, ChatGPT answers effortlessly and, according to the authors, impressively.

The authors suspect that ChatGPT gathered these examples from Wikipedia and countless other online publications that exhaustively analyze Enron’s downfall. Such analyses, of course, do not exist for new investigations. The question is whether ChatGPT can also analyze documents in new investigations. In a limited test, ChatGPT, given a specific question, appeared able to determine whether an email from the Enron set is relevant and, moreover, to explain why. But this does not work for new cases, because the underlying GPT-3 model has not read any information about them. GPT-3 can learn such new information, and OpenAI offers the ability to fine-tune models in the cloud (see sidebar 3, “Making GPT smarter: Prompt design versus fine-tuning”). But that is costly, and lawyers are reluctant to upload their clients’ sensitive documents. That situation is likely to change in the coming year as alternatives to GPT-3 emerge that can be trained on one’s own hardware (which is also becoming increasingly powerful).

ChatGPT is a fine-tuned version of GPT-3.5, and that fine-tuning is based on Reinforcement Learning from Human Feedback (RLHF). In other words, with the help of human reviewers, ChatGPT has learned not to use undesirable language (violent, sexist, racist, etc.) in conversations. ChatGPT has also learned what useful answers look like, so that conversations flow smoothly. Employing people to review thousands of prompts is costly, and it seems to be one of ChatGPT’s key success factors. But for a digital sleuth tasked with helping to analyze conversations between criminals, it is obviously a handicap when an investigator gets the reply “I’m sorry, I cannot generate inappropriate or aggressive language. The conversation you have provided contains profanity and offensive language, and as an AI language model, I am programmed to refrain from engaging in that type of behavior.”

So ChatGPT is not yet the ideal sleuth that can help search through digital evidence, but it is clear that large language models can be invaluable. What detectives need is SleuthGPT. To the prompt “Given that ChatGPT has been trained on conversations on a very broad range of topics, how should a SleuthGPT be trained?”, ChatGPT replies as follows:

“A SleuthGPT model could be trained by fine-tuning the original GPT-3 model on a dataset specifically focused on investigative and detective work. This dataset could include examples of detective work and investigations, including fictional and real-life cases, police reports, court transcripts, and other relevant material. The fine-tuning process would allow the model to learn the language, techniques, and logic used in sleuthing, and to generate more informed and accurate responses when asked questions about investigative processes and techniques. The goal of the fine-tuning would be to create a model that can support and assist human sleuths in their work, by providing relevant information, making connections, and generating hypotheses.”

We are not there yet, but, as we have come to expect from ChatGPT, the answer sounds very convincing.

[1] https://www.forensischinstituut.nl/actueel/nieuws/2021/05/05/nfi-leert-computers-om-berichten-met-doodsbedreiging-uit-grote-hoeveelheden-data-te-filteren

[2] https://www.law.com/legaltechnews/2023/01/25/what-will-ediscovery-lawyers-do-after-chatgpt/

Helping to formulate search questions

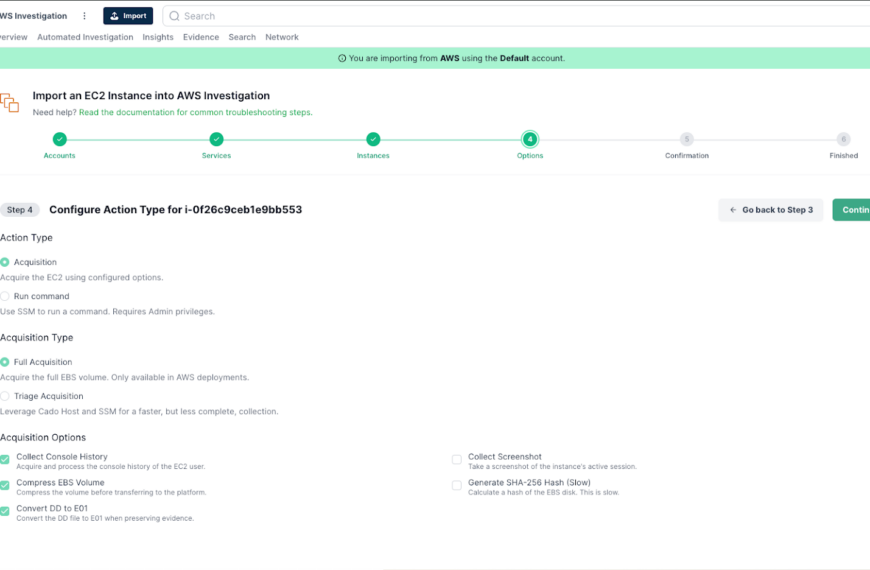

Hansken[3] is an open platform for investigative and security agencies to search and analyze digital traces from seized devices such as phones and computers. Hansken has been around for more than 10 years. It was developed by the Netherlands Forensic Institute and is built on, among others, Hadoop and Elasticsearch, which makes it capable of processing and storing petabytes of digital data. Hansken processes digital traces using a trace model. With the Hansken Query Language (HQL), these traces can be searched in the Elasticsearch database at lightning speed. HQL is a powerful language, similar to other query languages such as SQL but designed entirely around the Hansken trace model. Given a few examples and an explanation of HQL syntax, ChatGPT can readily convert plain-language questions into HQL. For example, the request “Find email traces with attachments sent between July 1 and July 28, 2022 in HQL” is effortlessly translated by ChatGPT into “email.hasAttachment:true email.sentOn>='2022-07-01' email.sentOn<='2022-07-28'”. To make this possible, ChatGPT was first given the Hansken HQL manual and a number of definitions from the trace model, including the email type.
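A minimal sketch of how such a few-shot prompt might be assembled. The helper `build_hql_prompt`, its instruction wording and the prompt layout are hypothetical; the only HQL it contains is the query quoted in this sidebar, reused here as a worked example. Sending the resulting string to the model is left out.

```python
# Hypothetical worked example: the question/answer pair quoted in the text.
FEW_SHOT_EXAMPLES = (
    (
        "Find email traces with attachments sent between July 1 and July 28, 2022",
        "email.hasAttachment:true email.sentOn>='2022-07-01' email.sentOn<='2022-07-28'",
    ),
)


def build_hql_prompt(question, syntax_notes, examples=FEW_SHOT_EXAMPLES):
    """Return a few-shot prompt: an instruction, HQL syntax notes,
    worked examples, and finally the new question."""
    parts = [
        "Translate the question into Hansken Query Language (HQL).",
        syntax_notes,
    ]
    for q, hql in examples:
        parts.append(f"Question: {q}\nHQL: {hql}")
    # Leave the final "HQL:" line open so the model completes it.
    parts.append(f"Question: {question}\nHQL:")
    return "\n\n".join(parts)


prompt = build_hql_prompt(
    "Find email traces sent on March 1, 2022",
    "<excerpt from the HQL manual and trace model goes here>",
)
print(prompt)
```

Keeping the syntax notes separate from the examples makes it easy to swap in a different excerpt of the manual, for instance definitions for another trace type, without touching the examples.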

Summarizing information

Phones and computers contain many traces of communication: think of instant messages such as WhatsApp, Telegram and SMS, and of e-mails. At the start of an investigation, very little is known, perhaps only the name of a suspect and a suspicion of a crime. Using the contacts on a phone or laptop, an investigator can gain insight into the suspect’s network, certain events, and locations linked to dates and times. Digging out all that information is time-consuming and does not always lead to relevant information. ChatGPT can summarize and organize chat transcripts and then answer questions about them. Some brief experiments with the current version of ChatGPT show that the length of such summaries is limited. But they do illustrate the power of a smart assistant that can be asked, in natural language, which people feature in the communications and what they are communicating about. Its output will certainly not be proof, but an investigator can use such an assistant to process information more quickly and pick up a trail that may ultimately lead to proof.
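Because a language model can only process a limited amount of text per request, one common workaround is to split a long transcript into chunks, summarize each chunk, and then summarize the summaries. The sketch below shows only the splitting step. The function name `chunk_transcript` is hypothetical, and it measures size in characters as a rough stand-in for the tokens a model actually counts.

```python
def chunk_transcript(lines, max_chars):
    """Split chat lines into consecutive chunks of at most max_chars
    characters (counting one newline between lines), never splitting a
    line. A single line longer than max_chars becomes its own chunk."""
    chunks, current, size = [], [], 0
    for line in lines:
        # Cost of adding this line, including the joining newline.
        extra = len(line) + (1 if current else 0)
        if current and size + extra > max_chars:
            # Close the current chunk and start a new one with this line.
            chunks.append("\n".join(current))
            current, size = [], 0
            extra = len(line)
        current.append(line)
        size += extra
    if current:
        chunks.append("\n".join(current))
    return chunks


# Hypothetical transcript.
transcript = ["A: hi", "B: hello", "A: meet at 9?", "B: ok"]
for chunk in chunk_transcript(transcript, max_chars=15):
    print(repr(chunk))
```

Splitting on message boundaries rather than at an arbitrary character offset keeps every message intact, so no summary is based on half a message.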

Making GPT smarter: Prompt design versus fine-tuning

A large language model like GPT is capable of answering questions related to the text it was trained on. But GPT can also be taught new tasks. Given a prompt containing a few examples, GPT often understands intuitively which task is intended and can complete new prompts on its own. This is called few-shot learning, and it is very useful for getting the model to produce an answer from a limited number of examples. But its capacity is limited, because only so many examples fit into a prompt. OpenAI therefore also offers the ability to fine-tune a model with specific data. According to OpenAI, fine-tuning gives better results than prompt design, lets you train on more data than fits in a prompt, saves costs because a fine-tuned model can work with shorter prompts, and improves response time.
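The difference between the two approaches shows up in where the examples live: in prompt design they are pasted into every prompt, while for fine-tuning they are collected in a training file. The sketch below writes question/answer pairs as JSON Lines in the prompt/completion layout that OpenAI documented for fine-tuning GPT-3 base models. The separator and stop-sequence values are conventions of the kind that guidance suggested, the helper name `to_finetune_jsonl` is hypothetical, and newer models expect a different (chat-message) format, so check the current documentation before relying on this layout.

```python
import json


def to_finetune_jsonl(pairs, separator="\n\n###\n\n", stop="\n"):
    """Serialize (question, answer) pairs as JSON Lines, one training
    example per line, in a prompt/completion layout."""
    lines = []
    for question, answer in pairs:
        record = {
            # A fixed separator marks where every prompt ends.
            "prompt": question + separator,
            # A leading space and a fixed stop sequence mark the
            # start and end of every completion.
            "completion": " " + answer + stop,
        }
        # json.dumps escapes the newlines inside the strings,
        # so each record stays on a single line.
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines) + "\n"


# Hypothetical training pair.
print(to_finetune_jsonl([("Is this e-mail relevant? <e-mail text>", "yes")]), end="")
```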

[3] https://www.hansken.org