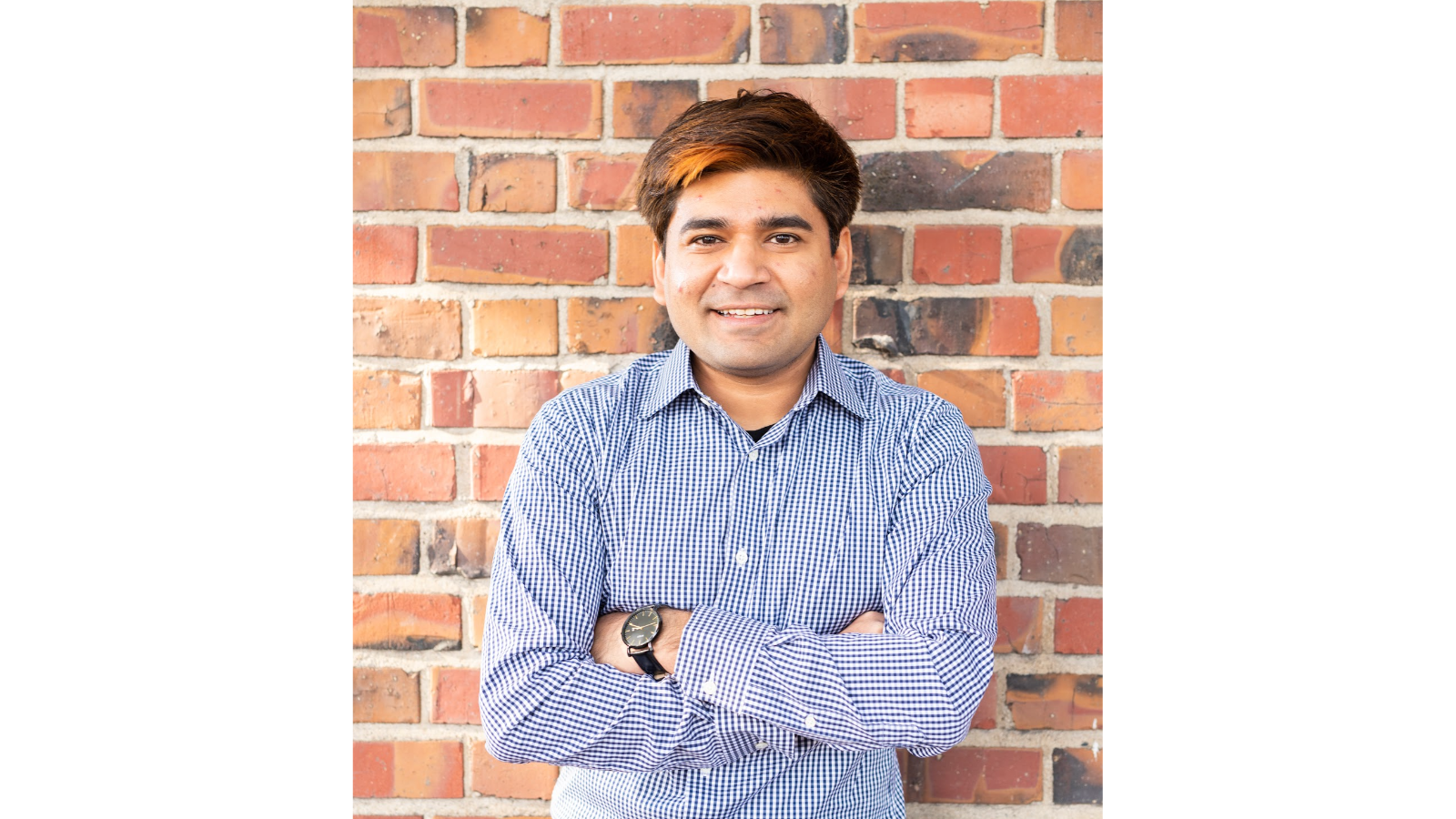

FF: Can you introduce yourself and tell us a little about what you do?

Karan: It is a pleasure to be here. I have been in the security industry for 7+ years now. Currently, I am a security engineering manager at Google, leading a team of engineers who help reduce risk to Alphabet in its use of GCP.

At Google, I have held technical leadership roles to bolster security of products and services with more than a billion users by building detection and response capabilities.

Previously, I was part of the incident response team at Yahoo that responded to the world’s largest data breach. In my past life, I was a software engineer at Honeywell, where I developed backend software for access control and digital video management services.

I run a blog where I write about security engineering interviewing tips which are provided by Google’s hiring team to prospective candidates.

How do you think digital forensics has evolved in the last decade?

From a technology perspective, we have come a long way from the era of the simple client-server model to a more decentralized and distributed network of interconnected systems. With this change, we have seen forensics evolve from practitioners conducting simple host- and network-based analysis to collecting data across a multitude of sources (smartphones, IoT devices, watches, gaming consoles, open source intelligence, etc.).

There is widespread adoption of cloud technologies, which has shifted our traditional collection, analysis and retention methods to the cloud as well. We used to have the generic term “forensic experts/examiners”; now, however, we are seeing more specializations emerge in the field because of the increased technological scope and expertise required for each.

Finally, a decade ago big data wasn’t as popular as it is today, and its rise has opened up a slew of tools for examiners (e.g. Splunk and the ELK stack, i.e. Elasticsearch, Logstash and Kibana) which have become mainstream for analysis.

From that evolution, what present-day challenges do you see that we’re facing as a digital forensics community?

There are significant challenges with collecting and analyzing evidence from IoT (Internet of Things) devices. With the huge influx of IoT devices from hundreds of manufacturers, there is no single standard for collecting and analyzing evidence that applies to all of them.

This is because, for each device, manufacturers are free to choose the combination of operating system, hardware platform and software that powers the device’s functionality. Furthermore, functionality can change with every software update, pushing practitioners and forensic examiners to stay on their toes, researching these changes and applying what they learn in their roles.

The second big challenge, I feel, is churning through data to find high-quality information. Digital forensics is not just a science but also an art, where an examiner can use their experience and a “gut” check to invalidate certain pieces of evidence within a huge pile of data. It is only by cutting down the noise that we get to the needle in the haystack.

This is another reason why we are now seeing more data analyst roles show up in the industry: analysts can help us narrow down signals and improve data quality.

What are some of the personal lessons you’ve learnt in your career?

Very intriguing question! There are quite a few lessons I have learnt throughout my career:

- Be curious, remain humble: It is almost impossible to know everything, not only in digital forensics but in any broad field. It is only by asking questions, learning from people around you and accepting when you don’t really know something that you see real, continuous, incremental growth.

- Communicate often, early and clearly: Whether you are talking to your peers, management, friends or family, this helps build relationships at home and fosters rapport at work. In the digital forensics space, written and oral communication is one of the key skills that differentiates a good examiner from a great examiner. When you are investigating incidents, this mantra has proven useful for keeping stakeholders informed and helping them make the right decisions for the company.

- Collaboration, not competition: It is easier to get more done and to obtain diverse opinions and differing points of view when you collaborate with others, especially if they or their ideas are slightly different from yours. In digital forensics, having another examiner challenge your point of view or question your artifacts and methods is one of the best exercises to prove validity. This makes a huge difference when the stakes are high (court cases, large breaches, etc.).

What’s next for digital forensics? Where do you see the future evolving, not just in the technology to be investigated, but in the ways examiners use to investigate it?

Technology-wise, I believe it is going to become more complex to correlate data across the myriad devices and software we have today and those to be launched in the future.

We will at some point also shift more towards web3 and other newer technologies in investigations, e.g. tracking transactions on different blockchains and correlating them with credit and debit card transactions in the physical world.

From a tooling and data utilization standpoint, tools like log2timeline will play an even more critical role in helping examiners work across data sources, as it will be easy to get lost tracing events.
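To illustrate the general idea of tracing events across data sources, here is a minimal Python sketch that merges time-ordered events from two sources into a single UTC timeline. It does not use log2timeline or reproduce its output format; the event tuples, source names and the `merge_timelines` helper are all hypothetical and exist only for this example.

```python
import heapq
from datetime import datetime, timezone


def parse(ts):
    """Parse an ISO 8601 timestamp with an offset and normalise it to UTC."""
    return datetime.fromisoformat(ts).astimezone(timezone.utc)


# Hypothetical events; each list is already sorted by time within its source.
host_events = [
    ("2024-01-06T09:00:00+00:00", "host", "user login"),
    ("2024-01-06T09:05:00+00:00", "host", "browser started"),
]
network_events = [
    # Recorded in a +01:00 time zone, so this is 09:02 UTC.
    ("2024-01-06T10:02:00+01:00", "network", "outbound connection"),
]


def merge_timelines(*sources):
    """Merge per-source event lists (each sorted by time) into one UTC timeline."""
    normalised = [
        [(parse(ts), src, msg) for ts, src, msg in events] for events in sources
    ]
    return list(heapq.merge(*normalised))


timeline = merge_timelines(host_events, network_events)
for when, source, message in timeline:
    print(when.isoformat(), source, message)
```

Normalising every timestamp to UTC before merging is the design choice that matters here: sources that log in different time zones would otherwise interleave in the wrong order.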

I also expect to see a lot of conclusions being drawn from side-channel information (implicit evidence). For example, if an examiner sees evidence of outbound network traffic from an actor’s home network to a cloud command and control service on a set of IP ranges every weekend, the examiner may interpret it as an action the user performs on weekends.

However, if the examiner also knows that the actor owns a vacuum bot whose vendor owns those IP ranges, then it may not be the user performing an activity at all but rather the vacuum bot, which is running on a schedule to clean the home. The relevance of such information completely changes the attribution of events, and this is expected to become mainstream.
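The vacuum bot scenario can be sketched as a simple lookup: before attributing traffic to the user, check whether the destination addresses fall inside ranges known to belong to a device vendor. The sketch below uses Python's standard `ipaddress` module. The vendor name and CIDR ranges are hypothetical (they are documentation-only address blocks), and `attribute_destination` is an illustrative helper, not part of any forensic tool.

```python
import ipaddress

# Hypothetical vendor-owned ranges, using documentation-only address blocks.
VENDOR_RANGES = {
    "vacuum-bot vendor": ["203.0.113.0/24", "198.51.100.0/25"],
}


def attribute_destination(ip, vendor_ranges=VENDOR_RANGES):
    """Return the vendor whose range contains `ip`, or None if no range matches."""
    addr = ipaddress.ip_address(ip)
    for vendor, cidrs in vendor_ranges.items():
        if any(addr in ipaddress.ip_network(cidr) for cidr in cidrs):
            return vendor
    return None


# Hypothetical destinations seen in the weekend traffic.
observed = ["203.0.113.42", "198.51.100.200", "192.0.2.7"]
labels = {ip: attribute_destination(ip) for ip in observed}

for ip, vendor in labels.items():
    print(ip, "->", vendor or "unattributed, needs further review")
```

A match does not prove the device generated the traffic; it only gives the examiner an alternative explanation to test before attributing the activity to a person.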

As technology and investigative tools become more complex and maybe, at least in some ways, less transparent, how can examiners best communicate their findings to management and other stakeholders?

I feel the simplest way is to follow the “Bottom line up front” approach, a.k.a. BLUF. This is similar to the TL;DR used in emails these days. The benefit of this approach is that your audience knows pretty much right away, at least in summarized form, what the issue is, its current status, and the next steps or action items on their end. The cyber intelligence field also touts this approach for briefing executives and government officials.

Now, to your point about technology becoming less transparent (we can also refer to it as abstracting the complexity away from the user), this is where BLUF shines, because the parts that were summarized initially can be followed up with more details for the curious reader. This is basically a layered approach to communication, where each layer provides more information to help the reader understand the entire picture better.

When you’re not working, what do you like to do in your spare time?

I enjoy taking long drives and visiting beaches (being in California is a blessing for these activities). In general I love to travel and am on track to visit all 50 U.S. states.

I also spend time thinking about and researching new security topics and ideas. I am into public speaking and have spoken at conferences, at universities and on podcasts. I volunteer to help people 1:1 in their careers by way of mentorship through LinkedIn. In the last few years, I have answered career questions from hundreds of professionals, averaging ~50-100 questions per month.