This is the third part of “How to deal with Structured Data”. In case you missed it, the previous part is here.

Picking up from where we left off…

Step 3

Now that we have our formatted text files, there are many free tools available on the internet that can be used to split these files into multiple files with the split occurring at a control string. Just search “text file splitter” in your favourite search engine and you’ll get several options, including a free one actually called “Text File Splitter” which I have used and which works well.

The choice of tool being used will influence what you use as control strings, since the tool may provide options that allow the control to be included or excluded from the output files, and even whether the control line can be used to name the output files.

If the control lines are only used to split the file into pieces, these splitting tools usually sequentially number the pieces. Since the sequence of the content of the formatted file matches the sequence of the records in the spreadsheet, you can use the spreadsheet(s) to generate rename commands if needed to use metadata from the spreadsheets in the file names.

Also, if you have the files split and the control line is the top line of the file and you want it removed, a free utility such as “tail” from unxutils can be used to remove the top line from a file.

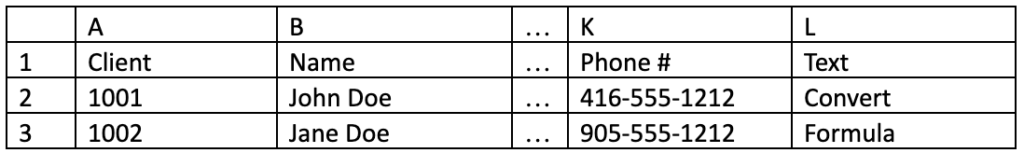

Using our two-table example mentioned in the previous article, the client table might look like this:

Column L has our text conversion formula which creates a text file, after Ultraedit adjustments has a line for each record starting with “Control_” followed by the client number, followed by numerous lines of the balance of the columns B-K. Assuming the text splitting utility splits the lines and places the series of split files in Folder_1, all named “Split_1.txt”, “Split_2.txt”, etc.

I can create formulas in Column M and N as follows (using M2 and N2 as examples):

M2 = “MD Folder_2\”&a2

N2 =”Move Folder_1\Split_”&row(a2)-1&”.txt Folder_2\”&a2&”\Client_”&a2&”.txt”

Running the commands in M and then N will move all the files from Folder_1 into Folder_2 in a subfolder named by the client number for each record.

If the text splitting utility does not remove the control line and you want it out, change N2 above to use the unxutil Tail command as follows:

N2 = “Tail -n +2 Folder_1\Split_”&row(a2)-1&”.txt > Folder_2\”&a2&”\Client_”&a2&”.txt”

If you have a data table where you are combining records like the example in step b), you’ll need to include a column that increments each time the control column changes, and the move command is only generated on the line where that column value is incremented.

Step 4

Going back to our client activity tracking example, at this point I should have text files for the client table named as Client_1.txt, Client_2.txt, etc. and Client_1_20201025.txt, Client_1_202011.25.txt, Client_2_20200101.txt for the interactions table. Just like the above example for the client table, I would recommend using similar commands to also move the associated documents from other tables into the family folder structure with the parent document in the root folder. Depending on the source data structure, you could even create sub folders for each child document and have the children there. Again, if the data structure indicates that child documents may also have child documents, then I would suggest expanding the structure so that the subfolders build a tree structure, so that the immediate parent document of any child is in the folder above.

Depending on the capabilities of the review platform being used, I now have several options for how to load the data into the review platform:

- I can create a load file that loads all of the various text documents as family members to the parent records (the parent records being from the client table in our example). Or perhaps multiple load files are required, one for each spreadsheet – load the parents, then load the children with links back to the parents.

- I can run all the files through the normal processing system to bring them into the review platform, then overlay a family structure within the review platform itself, using the folder structure to create the families.

- I’m sure you can think of other ways.

Regardless of how you get these text documents into the review platform, the spreadsheets can also be used to create load files of metadata values to be associated with the documents, either as part of the load file(s) in the first bullet above, or as a supplementary overlay file for processed files or other load methods. A combination of the bullets above is often required when the source database allows for uploading and export of attached files which will be typically treated as child records to the master records. For example, SalesForce custom applications can have attachment files associated with records.

These “attachments” should be exported as native files and run through the eDiscovery processing tool to extract their metadata (and potentially further attachments, if the attachments themselves were containers like emails or zips), and then use an overlay file to link these files as children to the master record documents that have been generated by the above process.

Bonus Tip: SalesForce custom applications exported using Dataloader.IO from Mulesoft

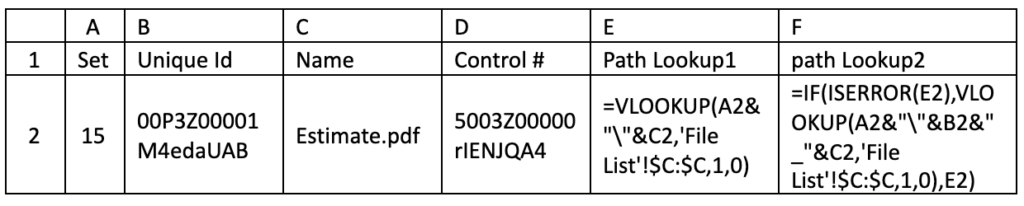

I have some experience with SalesForce attachment documents being exported with Dataloader.IO from Mulesoft. This product exports the attachments as native files into a single folder. There are limitations as to the number of records it can manage at a time, so multiple folders (sets) may be needed. For each attachment export, you can also export a corresponding Listing CSV file that links the attached file, by name, to the SalesForce control record number.

The specific point here, however, is that sometimes there are attachments between records which have the same file name, so when they are exported to the same folder, the application appends a unique control number to the file name as a prefix. Not to worry, as the Listing CSV also contains that control number. Therefore, to match the file name for any formulas, I would recommend that you add a sheet in Excel with the directory listing of all the attachments (dir /s /b /a-d) and lookup against that sheet for the name, and if it fails, then append the unique id number to the name so that you can match each child attachment processed back to the appropriate parent.

This above example is taken from an actual matter where the exports where in different sets and the output folders where numbered by the set. The formulas in columns E and F ensure that the attachment file path shown in column F can be linked back to the parent document which has the control number shown in column D.

In Conclusion

Whether you have followed the exact process outlined above, or designed a workflow of your own, remember to document the process that was followed and to keep all the intermediate work products so that you are able to defend the process in the event that the opposing party in the matter challenges the validity or inclusion of these documents.

About The Author

Harold Burt-Gerrans is Director, eDiscovery and Computer Forensics for Epiq Canada. He has over 15 years’ experience in the industry, mostly with H&A eDiscovery (acquired by Epiq, April 1, 2019).