Fookes Software has published a detailed technical evaluation of AI-powered email classification for forensic and eDiscovery practitioners. The results, available on Forensic Focus, demonstrate that modern AI classification can match or exceed the accuracy of Technology-Assisted Review (TAR) without requiring training data, seed sets, or any prior AI expertise.

The Familiar Problem

Most email investigations still begin with keyword searches. The method is well understood and widely accepted, but its structural limitation is equally well established: keywords match words, not meaning. A suspect discussing a bribery scheme is unlikely to use the word “bribery.” Emails that rely on context, indirect language, or shorthand between participants rarely appear in keyword results, no matter how carefully the search terms are chosen. Research has consistently shown that practitioners overestimate their keyword recall by a wide margin: they believe they are finding 75% of relevant material when they are actually averaging closer to 20%.
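The limitation is easy to reproduce. The following is a minimal Python sketch, not taken from the Fookes Software evaluation: the two sample messages, the search terms, and the `keyword_hits` helper are all invented for illustration. It shows a whole-word keyword search returning a harmless compliance notice while missing a message that describes a payment indirectly.

```python
import re

# Hypothetical sample messages, invented purely for illustration.
emails = [
    "Reminder: the anti-bribery compliance training is on Friday.",
    "He wants the usual envelope before he signs off on the permit.",
]


def keyword_hits(messages, terms):
    """Return the messages that contain any term as a whole word (case-insensitive)."""
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b",
        re.IGNORECASE,
    )
    return [m for m in messages if pattern.search(m)]


hits = keyword_hits(emails, ["bribery", "bribe", "kickback"])
for message in hits:
    print(message)
```

Only the training reminder is returned. The second message, which a human reviewer would likely want to see, contains none of the search terms and is never surfaced.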

TAR improves on this, but introduces friction. A subject matter expert must manually classify hundreds or thousands of documents before the system can begin making useful decisions. Each new case requires a fresh training cycle, and the delay can be difficult to justify in fast-moving investigations.

How AI Classification Works Differently

The AI classification approach in Aid4Mail does not learn from examples. Instead, it reads each email and evaluates what it means—much like a human reviewer, but at machine speed. You describe what you are looking for in plain language, define the categories you want the system to assign, and processing begins immediately. There is no training phase. No statistical tuning. No waiting days for results.

For practitioners who have used tools like ChatGPT, the underlying technology will be familiar: Aid4Mail uses the same type of Large Language Models (LLMs), but applies them to structured classification rather than open-ended text generation. That distinction is important, and it directly addresses the most common objection.

“Can AI Be Trusted?”

Skepticism about AI is understandable—over 1,000 cases involving AI-generated fabrications have been documented in legal proceedings globally. But those cases involve generative AI, which produces free-form text and can invent convincing but false content. Classification AI is structurally different. It reads an email and selects a label from a set you define—such as Relevant, Not Relevant, or Inconclusive. It cannot fabricate a date, invent a name, or assert something not present in the source material.

“Classification AI does not generate facts—it selects from categories you define. That structural difference is what makes it defensible in high-stakes review.”

—Eric Fookes, Founder & CEO, Fookes Software Ltd

When evidence is ambiguous, Aid4Mail flags the email as Inconclusive for human review rather than forcing a decision.
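The idea of a closed label set can be sketched in a few lines of Python. This is an illustration of the general principle only and makes no claim about how Aid4Mail is implemented: the `Label` enum and the `parse_label` function are hypothetical names, and routing every unrecognized reply to Inconclusive is an assumption made for the sketch.

```python
from enum import Enum


class Label(Enum):
    """The closed set of categories the reviewer defines up front."""
    RELEVANT = "Relevant"
    NOT_RELEVANT = "Not Relevant"
    INCONCLUSIVE = "Inconclusive"


def parse_label(raw_response: str) -> Label:
    """Map a model's raw text reply onto the closed label set.

    Assumption for this sketch: any reply that is not exactly one of the
    defined labels (ignoring case and surrounding whitespace) is routed
    to INCONCLUSIVE so that a human reviews the email.
    """
    cleaned = raw_response.strip().casefold()
    for label in Label:
        if cleaned == label.value.casefold():
            return label
    return Label.INCONCLUSIVE


print(parse_label(" relevant "))
print(parse_label("The sender met John on 3 May."))
```

Whatever text the model returns, the only values that can reach the review output are the three labels. Free-form content, such as the second reply above, never passes through as a finding.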

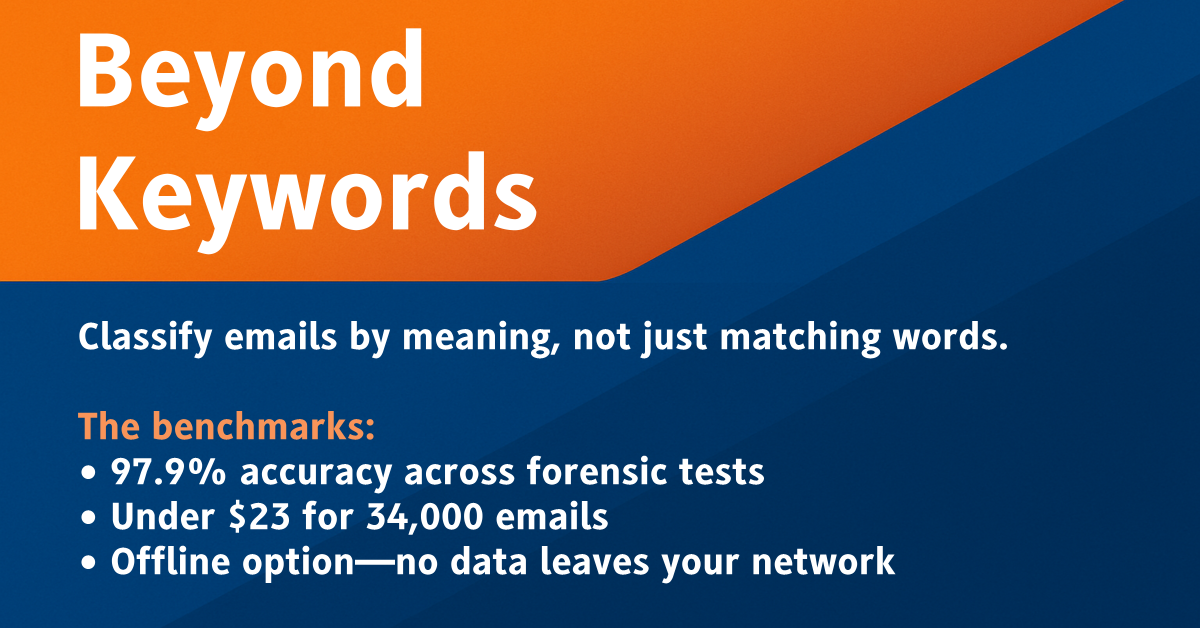

What the Benchmarks Found

Fookes Software tested 18 AI models across four tests spanning over 35,000 emails. The top cloud model achieved 97.9% accuracy across the structured tests. The fastest model had a projected throughput of over 400,000 emails in a single unattended weekend run. A full production test of 34,097 emails, including attachment content, cost under $23 using a high-throughput cloud model.
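To put the production-test figure in per-email terms, the short Python calculation below uses only the two numbers quoted above. Because the cost is reported as "under $23", the result is an upper bound rather than an exact price, and the `cost_per_thousand` helper is a name introduced here for illustration.

```python
def cost_per_thousand(total_cost_usd: float, email_count: int) -> float:
    """Return the cost in US dollars per 1,000 emails."""
    return total_cost_usd / email_count * 1000


# Figures quoted in the article; $23 is a ceiling ("under $23").
upper_bound = cost_per_thousand(23.00, 34_097)
print(f"At most about ${upper_bound:.2f} per 1,000 emails")
```

That is a ceiling of roughly $0.67 per 1,000 emails, or well under a tenth of a cent per email, attachments included.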

For organizations that cannot send evidence to the cloud, Aid4Mail also supports fully offline classification using locally installed models. The recommended offline model achieved 93.1% accuracy and, in binary classification, matched the best cloud results—with no internet connection required and no data leaving the local environment. Top models handled emails in English, German, French, and Korean with no meaningful drop in accuracy.

No AI Expertise Required

Aid4Mail ships with over 200 pre-written classification prompts organized by investigative context—covering digital forensics themes (including financial fraud, cybercrime, and insider threats), eDiscovery scenarios (antitrust, IP theft, harassment, and more), and government records categories. You select a prompt, adjust it for your case if needed, and run the classification. No coding, no model training, and no machine learning knowledge required.

Read the Full Technical Analysis

The premium article, Beyond Keywords: AI Classification for Forensic Email Review, provides the complete methodology, model-by-model benchmark results, deployment guidance, and practical starting points. It is available now on Forensic Focus.

A fully functional free trial of Aid4Mail is available at aid4mail.com.