Ralph Palutke discusses his work at DFRWS EU 2018.

Ralph: Yeah. Welcome, everyone, and thank you for the introduction and for the opportunity to present our research. As mentioned, my name is Ralph Palutke, and I am a PhD student in the Security Research Group at Friedrich-Alexander University Erlangen-Nürnberg, which is led by Prof. Freiling, who is also attending this conference.

In this talk, I want to propose a novel rootkit technique that is able to counter memory acquisition tools that claim to be robust even against anti-forensics.

Nowadays, malware is still the enabling technology for modern cybercrime, and therefore, there is great demand for methods to detect, acquire, and analyze it. Since modern malware often exists only in volatile memory, memory acquisition has become a vital tool for digital investigations. In this talk, I want to focus solely on software-based methods that run directly on the target system. Lately, we’ve seen that memory forensics has to face two new, sophisticated threats: on the one hand, hidden memory rootkits, and on the other hand, rootkits that subvert hypervisor technology.

Let me start off with hidden memory rootkits, which require us to introduce the concept of hidden memory first. Physical memory is not one contiguous, fully accessible address space; instead, it is interleaved with areas that are not backed by any accessible RAM. During the early boot sequence, the BIOS creates a memory map, which separates available memory from reserved memory. While available regions can be used by the OS and its processes, reserved regions are typically used for all kinds of devices as well as for read-only memory.

The kernel can request this BIOS map by issuing a BIOS service interrupt (on x86, INT 15h with function E820h, which is why it is often called the E820 map). The kernel can then let its PCI devices map their MMIO buffers into these reserved regions, redefining the layout in the process. The interesting thing about the reserved regions is that PCI devices typically do not claim an entire reserved region, which leaves small offcuts that we refer to as hidden memory. The dangerous thing about these offcuts is that most acquisition tools simply avoid acquiring them.

Back to our hidden memory rootkits: as the name suggests, these are simply rootkits that reside in hidden memory. They typically hijack control flow by installing conventional hooks, which makes them fairly easy to detect. We can use conventional strategies to detect those hooks, and we can also resolve the hooks’ targets, which leads us directly to the hidden memory. By enhancing our current acquisition tools, for example, we could then use the same access mechanism as the rootkit to acquire its contents.

This brings me to the next kind of rootkit, hypervisor rootkits, which are basically malicious hypervisors that are able to virtualize a system at runtime. To do so, they typically rely on a processor’s virtualization extensions, which in the Intel world are called VT-x. There are some famous examples: the Blue Pill rootkit, developed by Joanna Rutkowska a few years ago, was able to subvert Windows systems, while Vitriol, developed by Dino Dai Zovi, did basically the same thing for Mac OS.

Typically, hypervisor rootkits are implemented as kernel drivers that, during execution, install a very thin hypervisor beneath the operating system, which in turn migrates the running system into a virtual machine on the fly.

So, no reboot is required, and the system is not even aware that it has become a virtualized guest. To isolate its memory from the guest system, the rootkit typically uses a second address translation stage, such as the one provided by Intel’s Extended Page Tables (EPT). Normally, a virtual address is translated into a physical address; with EPT enabled, there is a second translation from guest physical addresses to host physical addresses.
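To make the two translation stages concrete, here is a toy model. It is a sketch under simplifying assumptions: 4 KiB pages, flat dictionaries standing in for the guest’s page tables and for the EPT (real x86-64 hardware walks four-level radix trees for both), and hypothetical page numbers. It also ignores that, on real hardware, the guest’s own page-table pages are themselves reached through EPT.

```python
PAGE_SHIFT = 12
PAGE_MASK = (1 << PAGE_SHIFT) - 1


def translate(table: dict, addr: int) -> int:
    """One translation stage: page frame number -> page frame number."""
    frame = table[addr >> PAGE_SHIFT]  # KeyError ~ page fault / EPT violation
    return (frame << PAGE_SHIFT) | (addr & PAGE_MASK)


def gva_to_hpa(guest_page_table: dict, ept: dict, gva: int):
    gpa = translate(guest_page_table, gva)  # stage 1: owned by the guest kernel
    hpa = translate(ept, gpa)               # stage 2: owned by the hypervisor
    return gpa, hpa


# Hypothetical mappings: GVA page 0x7F000 -> GPA page 0x1234 -> HPA page 0x9ABC
guest_page_table = {0x7F000: 0x1234}
ept = {0x1234: 0x9ABC}
gpa, hpa = gva_to_hpa(guest_page_table, ept, 0x7F000ABC)
print(hex(gpa), hex(hpa))  # 0x1234abc 0x9abcabc
```

The guest controls only the first dictionary; whoever controls the second one decides which host memory the guest actually sees.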

To detect these rootkits, let’s first assume we have detector software that runs in kernel mode and therefore has direct and full access to physical memory, which of course requires kernel privileges. Without any memory virtualization, the detector could simply access the hypervisor’s code directly and acquire its contents. With EPT enabled … and I don’t know why all of my graphics are somehow damaged.

But regardless: with EPT enabled, hypervisor rootkits typically set up so-called guard regions, and every time the detector tries to access the rootkit’s code, it is redirected to the guard region through EPT. So, while the detector has no access to the rootkit itself, it can still access the guard region directly. Therefore, we can detect a hypervisor rootkit by overwriting the rootkit’s memory, which is redirected to the guard region, and then reading back the guard’s contents directly. This reveals a connection between the two areas, so the detector can uncover the redirection mechanism. More guards would obviously not solve the problem, since the same technique detects connections between guards too; they would only consume more host memory, so that is not really feasible. To conclude, hypervisor rootkits are detectable even by in-guest memory acquisition, despite their use of physical memory virtualization.
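The write-and-read-back idea can be sketched as a toy simulation. Everything here is an assumption for illustration: a hypothetical `NaiveGuardedMemory` class models a rootkit that redirects its pages to one writable, guest-visible guard page, and the detector writes a unique token into every candidate page and then reads them all back. Any page whose token was clobbered shares backing storage with another page. A real detector would also have to save and restore the contents it overwrites.

```python
PAGE = 4096


class NaiveGuardedMemory:
    """Guest-physical pages backed by host pages through an EPT-like map."""

    def __init__(self, num_pages, hidden, guard):
        self.host = {p: bytearray(PAGE) for p in range(num_pages)}
        # Identity map, except the rootkit's pages are redirected to the guard.
        self.ept = {p: (guard if p in hidden else p) for p in range(num_pages)}

    def write(self, gpa_page, data: bytes):
        self.host[self.ept[gpa_page]][:len(data)] = data

    def read(self, gpa_page, n):
        return bytes(self.host[self.ept[gpa_page]][:n])


def find_aliased_pages(mem, pages):
    """Write a unique token to every page, then read all of them back.
    Pages whose token was clobbered share backing storage with another page."""
    for p in pages:
        mem.write(p, p.to_bytes(8, "little"))
    return [p for p in pages if mem.read(p, 8) != p.to_bytes(8, "little")]


mem = NaiveGuardedMemory(num_pages=16, hidden={10, 11}, guard=12)
print(find_aliased_pages(mem, range(16)))  # [10, 11]
```

The guard page itself (page 12) is not flagged because its token was written last, but both rootkit pages that alias it are; adding more guards would just add more aliased pairs to find.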

So, we decided to combine the advantages of both hidden memory and hardware-based virtualization. The result is a proof-of-concept hypervisor rootkit called Styx, which resides entirely in hidden memory and is able to virtualize running x64 Linux systems.

As a side note, we do not consider a rootkit inherently malicious, but rather a neutral tool that can hide both malicious and benign software. So, different use cases are conceivable: of course, the standard rootkit scenario of integrating malicious payloads, but we could also use it for various stealthy forensic modules, for example, a memory dumper.

As for the rootkit architecture, it consists of three components. First, there is an installer component, which runs in user mode and controls the kernel components via the ioctl interface. Then we have two kernel modules: on the one hand, the Rootkit LKM (LKM stands for loadable kernel module in the Linux world), which is responsible for setting up the hypervisor and virtualizing the target system; and on the other hand, a second module called the Eraser LKM, which deletes all remaining traces from the guest’s memory. For the installation, we start off by launching our installer, which obviously requires root privileges, since it loads the kernel modules during installation.

The first module we load is the Rootkit LKM, which has three functions. First, it migrates our thin hypervisor’s code into hidden memory. Second, it installs this thin hypervisor by virtualizing the running system on the fly. Third, it isolates the hypervisor’s memory using Intel’s EPT.

The system virtualization itself requires three steps. We first check whether the processor meets the requirements to enter VMX operation, which is needed to set up hardware-based virtual machines. We then initialize hypervisor-related data structures, for example, the virtual machine control structure (VMCS), a data structure that is used to configure both the hypervisor and its guests. To virtualize an already-running system, we need to reflect the system’s current execution state in the appropriate guest-state fields of the VMCS. For example, we need to mirror the current instruction pointer and stack pointer as well as the segment and control registers.

Furthermore, the VMCS can be used to declare which events should be intercepted by the hypervisor at runtime. Finally, we launch the running system into our hardware-based virtual machine.
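The first of these steps, checking whether the processor can enter VMX operation, comes down to two architectural bits on Intel CPUs: CPUID.01H:ECX[5] (VMX support) and the IA32_FEATURE_CONTROL MSR (index 0x3A), whose bit 0 locks the MSR and whose bit 2 enables VMX outside SMX. The sketch below only evaluates register values that have already been read; actually reading the MSR, setting CR4.VMXE, executing VMXON, filling the VMCS, and launching the guest all require ring-0 code. The function name and status strings are hypothetical.

```python
CPUID1_ECX_VMX = 1 << 5          # CPUID.01H:ECX bit 5: VMX supported
IA32_FEATURE_CONTROL = 0x3A      # MSR index (reading it needs ring 0)
FC_LOCK = 1 << 0                 # bit 0: MSR locked until next reset
FC_VMX_OUTSIDE_SMX = 1 << 2      # bit 2: VMXON allowed outside SMX


def vmx_status(cpuid1_ecx: int, feature_control: int) -> str:
    """Classify whether VMXON can be expected to succeed (hypothetical helper)."""
    if not cpuid1_ecx & CPUID1_ECX_VMX:
        return "unsupported"
    if feature_control & FC_LOCK:
        # Locked by firmware: VMX is usable only if firmware enabled it.
        if feature_control & FC_VMX_OUTSIDE_SMX:
            return "ready"
        return "disabled-by-firmware"
    # Unlocked: ring-0 code could set bit 2 and the lock bit itself.
    return "unlocked"


print(vmx_status(CPUID1_ECX_VMX, FC_LOCK | FC_VMX_OUTSIDE_SMX))  # ready
```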

After the virtualization, we load our second module, the Eraser LKM. As I said, its main purpose is to delete the remaining traces from the guest, which basically consist of the Rootkit LKM’s code and data sections. We have to be careful here, because there are a few sections that we must not overwrite until the Rootkit LKM has been unloaded. One example is the .exit.text section, because it contains the code that is needed to actually unload the module. Any premature erasure would lead to an instant kernel crash as soon as we try to unload it.

To get rid of all traces, we erase these remaining sections after we have unloaded the Rootkit LKM. But then we have to make absolutely sure that these memory areas have not been claimed by other kernel modules in the meantime, as we would obviously crash them otherwise.
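The ordering constraint can be sketched as follows. The section list, the `NEEDED_FOR_UNLOAD` set (which contains only the `.exit.text` example from the talk), and the overlap check against other loaded modules are hypothetical illustrations, not Styx’s actual code; in a real kernel, such a check would also have to be free of races with concurrent module loading.

```python
# Sections that must survive until the Rootkit LKM is unloaded; the talk names
# .exit.text as an example, and any others would be an assumption.
NEEDED_FOR_UNLOAD = {".exit.text"}


def erase_plan(sections):
    """Split a module's sections into 'wipe now' and 'wipe after unload'."""
    now = [s for s in sections if s not in NEEDED_FOR_UNLOAD]
    later = [s for s in sections if s in NEEDED_FOR_UNLOAD]
    return now, later


def overlaps(a_start, a_size, b_start, b_size):
    """True if [a_start, a_start+a_size) and [b_start, b_start+b_size) intersect."""
    return a_start < b_start + b_size and b_start < a_start + a_size


def safe_to_wipe(region, loaded_modules):
    """Only wipe a freed region if no other loaded module now occupies it."""
    start, size = region
    return not any(overlaps(start, size, m_start, m_size)
                   for _name, m_start, m_size in loaded_modules)


now, later = erase_plan([".text", ".data", ".bss", ".exit.text"])
print(now, later)  # ['.text', '.data', '.bss'] ['.exit.text']
```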

We can then unload the Rootkit LKM, which has a few nice benefits: it automatically deregisters our module from all relevant kernel data structures and removes all of its entries from the file system. The nice thing is that we no longer need any direct kernel object manipulation; everything is deregistered automatically.

Note that the rootkit stays fully operational even though its module has been unloaded, because at this point we have already installed a thin hypervisor in hidden memory.

Afterwards, we can unload the second module and instruct our installer to securely wipe all of Styx’s files from the disk, which is done by overwriting their contents as well as their metadata. So, not even specialized forensic recovery tools would be able to recover their contents.
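One common way to implement such a wipe, similar in spirit to GNU `shred -u`, is sketched below: overwrite the data in place, flush it to disk, truncate the file, rename it to a random name to scrub the directory entry, and finally unlink it. This is an illustrative sketch, not Styx’s installer code, and it is only best effort: journaling or copy-on-write file systems and SSD wear leveling may retain older copies of the data.

```python
import os
import secrets


def secure_delete(path: str, passes: int = 1) -> None:
    """Best-effort wipe: overwrite contents, truncate, rename, then unlink."""
    size = os.path.getsize(path)
    with open(path, "r+b") as f:
        for _ in range(passes):
            f.seek(0)
            remaining = size
            while remaining:
                chunk = min(remaining, 1 << 16)
                f.write(secrets.token_bytes(chunk))  # random overwrite
                remaining -= chunk
            f.flush()
            os.fsync(f.fileno())  # push this pass to the device
        f.truncate(0)  # drop the size information from the inode
        f.flush()
        os.fsync(f.fileno())
    # Replace the original file name in the directory entry before unlinking.
    scrubbed = os.path.join(os.path.dirname(os.path.abspath(path)),
                            secrets.token_hex(8))
    os.rename(path, scrubbed)
    os.unlink(scrubbed)
```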

As a result, we have installed a very thin hypervisor beneath the operating system, and our target system is not even aware of being virtualized at this point. As a nice side-effect, we do not have to install a single hook in the system to obtain control, because this can be done by intercepting certain events through Intel VT-x.

I’ve talked a lot about hidden memory, so I think it’s time to briefly introduce our hidden memory manager. It is responsible for enumerating all hidden memory ranges in the physical address space and for providing basic hidden memory operations, such as allocating and freeing memory, as well as basic address translations. We cannot rely on kernel functionality for any of this, because our hypervisor is not allowed to map the kernel at all.
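A manager of this kind, together with the software TLB mentioned in the talk, might be sketched like this. The data structures are hypothetical (the talk does not describe them): a FIFO free list of hidden physical pages, a dictionary of virtual-to-physical mappings standing in for the hypervisor’s own page tables, and a small LRU cache acting as the TLB that is invalidated on free.

```python
from collections import OrderedDict, deque

PAGE = 4096
PAGE_MASK = PAGE - 1


class HiddenMemoryManager:
    """Hypothetical page allocator over hidden physical ranges, with its own
    virtual-to-physical mappings and a small LRU software TLB in front."""

    def __init__(self, ranges, tlb_size=8):
        # ranges: page-aligned, half-open (start, end) physical ranges
        self.free = deque(p for start, end in ranges
                          for p in range(start, end, PAGE))
        self.mappings = {}          # virtual page -> physical page
        self.tlb = OrderedDict()    # cached subset of self.mappings
        self.tlb_size = tlb_size
        self.tlb_hits = 0

    def alloc(self, vaddr):
        """Back the virtual page containing vaddr with a hidden physical page."""
        if not self.free:
            raise MemoryError("hidden memory exhausted")
        phys = self.free.popleft()
        self.mappings[vaddr & ~PAGE_MASK] = phys
        return phys

    def free_page(self, vaddr):
        vpage = vaddr & ~PAGE_MASK
        self.free.append(self.mappings.pop(vpage))
        self.tlb.pop(vpage, None)   # invalidate any stale TLB entry

    def translate(self, vaddr):
        vpage, offset = vaddr & ~PAGE_MASK, vaddr & PAGE_MASK
        if vpage in self.tlb:       # fast path: TLB hit
            self.tlb.move_to_end(vpage)
            self.tlb_hits += 1
            return self.tlb[vpage] + offset
        phys = self.mappings[vpage]  # slow path (stands in for a table walk)
        self.tlb[vpage] = phys
        if len(self.tlb) > self.tlb_size:
            self.tlb.popitem(last=False)  # evict least recently used
        return phys + offset
```

Invalidating the cached entry on free matters: otherwise a stale translation could keep pointing at a page that has been handed out again.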

Furthermore, we implemented our own translation lookaside buffer (TLB), simply to speed up hidden-memory-related address translations. As for the hidden memory enumeration itself, we start by treating the entire physical memory as one contiguous hidden memory region, and then we successively refine this layout by excluding certain memory ranges. Whatever remains at the end is our hidden memory. We start off by excluding PCI device ranges, which we do by manually enumerating the PCI bus and then excluding the corresponding MMIO buffers, as given by each device’s base address registers (BARs).

This is really the most important step in the enumeration algorithm, since accessing a device’s MMIO buffers could lead to undefined behavior and ultimately crash the system.
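The PCI exclusion step can be sketched in two parts: decoding a memory BAR into an MMIO window, and subtracting that window from the candidate layout. The decoding follows the PCI convention of writing all ones to a BAR and reading it back to learn the window size (real code must save and restore the BAR, and should disable memory decoding in the command register while doing so). The function names, the assumed 36-bit starting layout, and the example BAR values are illustrative assumptions, not Styx’s code.

```python
MASK32 = 0xFFFFFFFF
MASK64 = (1 << 64) - 1


def decode_memory_bar(value, sized, value_hi=0, sized_hi=MASK32):
    """Return (base, size) of a PCI memory BAR.

    value/value_hi: original BAR contents (lower/upper dword);
    sized/sized_hi: read-back after writing 0xFFFFFFFF to the BAR(s).
    The upper dwords only matter for 64-bit BARs.
    """
    if value & 0x1:
        raise ValueError("I/O space BAR, not a memory BAR")
    if ((value >> 1) & 0x3) == 0x2:           # type 10b: 64-bit BAR pair
        base = (value_hi << 32) | (value & ~0xF & MASK32)
        mask = (sized_hi << 32) | (sized & ~0xF & MASK32)
        return base, (~mask + 1) & MASK64
    base = value & ~0xF & MASK32              # type 00b: 32-bit BAR
    return base, (~(sized & ~0xF) + 1) & MASK32


def subtract(ranges, start, end):
    """Remove [start, end) from a sorted list of half-open (s, e) ranges."""
    out = []
    for s, e in ranges:
        if e <= start or s >= end:            # no overlap: keep as is
            out.append((s, e))
            continue
        if s < start:                         # keep the part below the hole
            out.append((s, start))
        if e > end:                           # keep the part above the hole
            out.append((end, e))
    return out


# Start from the whole (assumed 36-bit) physical space as "hidden" ...
layout = [(0, 1 << 36)]
# ... and carve out every MMIO window found on the PCI bus (example values).
for bar in [(0xFEB00000, 0xFFFFF000), (0xC000000C, 0xF000000C, 0x0, MASK32)]:
    base, size = decode_memory_bar(*bar)
    layout = subtract(layout, base, base + size)
print([(hex(s), hex(e)) for s, e in layout])
```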

Afterwards, we make use of a kernel symbol, the iomem_resource tree, which basically describes the layout of physical memory ranges. In this step, we exclude all ranges that are not marked as reserved. Afterwards, we check the remaining regions for any meaningful data, as some reserved regions could contain read-only data, such as the system ROM, and overwriting this data could obviously cause fatal damage to our system.
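From user space, the iomem_resource tree is visible as /proc/iomem, where nested resources are indented by two spaces per level (on recent kernels, addresses read as zero without root privileges). A minimal parser that keeps only the top-level ranges marked as reserved might look like this. The sample text is made up for illustration, and the talk describes using the kernel symbol directly rather than parsing text.

```python
import re

IOMEM_LINE = re.compile(
    r"^(?P<indent> *)(?P<start>[0-9a-fA-F]+)-(?P<end>[0-9a-fA-F]+) : (?P<name>.+)$")


def parse_iomem(text):
    """Yield (depth, start, end_exclusive, name) for every /proc/iomem entry."""
    for line in text.splitlines():
        m = IOMEM_LINE.match(line)
        if m:
            yield (len(m["indent"]) // 2, int(m["start"], 16),
                   int(m["end"], 16) + 1, m["name"].strip())


def reserved_ranges(text):
    """Top-level ranges marked as reserved ("Reserved" or "reserved")."""
    return [(start, end) for depth, start, end, name in parse_iomem(text)
            if depth == 0 and name.lower() == "reserved"]


SAMPLE = """\
00000000-00000fff : Reserved
00001000-0009fbff : System RAM
0009fc00-0009ffff : Reserved
000a0000-000bffff : PCI Bus 0000:00
000f0000-000fffff : Reserved
  000f0000-000fffff : System ROM
"""
print([(hex(s), hex(e)) for s, e in reserved_ranges(SAMPLE)])
```

Note that the nested "System ROM" entry is still inside a reserved range, which is exactly why the next check for meaningful data is needed.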

As a last step, we need to verify whether the remaining regions are indeed accessible and therefore backed by RAM. We do this by modifying a few bytes in each page of the remaining regions and then checking whether the page’s contents changed. If so, we infer the existence of accessible RAM; all other pages are excluded from our layout. In the end, on our test system, we were able to find two hidden memory ranges, which amount to roughly 252 kilobytes that can then be used by our rootkit.
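The last two checks (skipping pages that hold meaningful data, then probing whether a write actually sticks) can be simulated against a fake physical memory. All of the following are hypothetical assumptions: `FakePhysMem` stands in for real physical accesses, unbacked pages ignore writes and read as 0xFF, a page counts as free only if all of its bytes are identical, and three probe offsets per page suffice. Probing real reserved memory is only reasonable after the MMIO ranges have been excluded.

```python
PAGE = 4096
PROBE_OFFSETS = (0, PAGE // 2, PAGE - 1)   # hypothetical sample points
UNBACKED = 0xFF                            # assumed read value without RAM


class FakePhysMem:
    """Backed pages behave like RAM; unbacked pages drop writes, read 0xFF."""

    def __init__(self, backed_pfns):
        self.backed = set(backed_pfns)
        self.data = {}

    def read8(self, addr):
        if addr // PAGE not in self.backed:
            return UNBACKED
        return self.data.get(addr, 0)

    def write8(self, addr, value):
        if addr // PAGE in self.backed:
            self.data[addr] = value & 0xFF


def looks_unused(mem, page):
    """Heuristic: no meaningful data if every byte of the page is identical."""
    first = mem.read8(page)
    return all(mem.read8(page + i) == first for i in range(PAGE))


def is_backed_by_ram(mem, page):
    """Flip a few bytes, check that the change sticks, then restore them."""
    for off in PROBE_OFFSETS:
        addr = page + off
        old = mem.read8(addr)
        mem.write8(addr, old ^ 0xFF)
        stuck = mem.read8(addr) == (old ^ 0xFF)
        mem.write8(addr, old)               # restore the original byte
        if not stuck:
            return False
    return True


def hidden_pages(mem, candidate_ranges):
    return [p for start, end in candidate_ranges
            for p in range(start, end, PAGE)
            if looks_unused(mem, p) and is_backed_by_ram(mem, p)]
```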

As I said before, at some point during the installation we need to migrate the rootkit’s code into hidden memory. So, assume we have a page of the rootkit, for example from its code section, which we start by copying into hidden memory. Because code pages often contain relative addressing, such as jumps or branches, we would either have to relocate the page’s code or, which is what we did, simply map it the same way it is mapped in the guest. That is, the page keeps the same virtual address, because we map it identically in our own hypervisor’s page tables.
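Mapping a migrated page at its original guest virtual address means inserting it into the hypervisor’s own four-level page tables at the same indices the guest uses. The sketch below splits a canonical x86-64 virtual address into its PML4, PDPT, PD, and PT indices (9 bits each, above a 12-bit page offset) and uses nested dictionaries as stand-ins for page-table pages. The example address (in the x86-64 kernel module area) and the hidden physical page are hypothetical.

```python
def pt_indices(vaddr: int) -> dict:
    """Split a canonical x86-64 virtual address for 4-level paging."""
    return {
        "pml4": (vaddr >> 39) & 0x1FF,
        "pdpt": (vaddr >> 30) & 0x1FF,
        "pd": (vaddr >> 21) & 0x1FF,
        "pt": (vaddr >> 12) & 0x1FF,
        "offset": vaddr & 0xFFF,
    }


def map_page(pml4: dict, vaddr: int, phys_page: int) -> None:
    """Insert phys_page at the same indices the guest uses for vaddr."""
    idx = pt_indices(vaddr)
    pdpt = pml4.setdefault(idx["pml4"], {})
    pd = pdpt.setdefault(idx["pdpt"], {})
    pt = pd.setdefault(idx["pd"], {})
    pt[idx["pt"]] = phys_page


def lookup(pml4: dict, vaddr: int) -> int:
    idx = pt_indices(vaddr)
    return pml4[idx["pml4"]][idx["pdpt"]][idx["pd"]][idx["pt"]] + idx["offset"]


# Hypothetical: a module code page copied to hidden physical page 0x9F000
# and mapped at its original virtual address in the hypervisor's tables.
hv_pml4 = {}
map_page(hv_pml4, 0xFFFFFFFFC0A00000, 0x9F000)
print(hex(lookup(hv_pml4, 0xFFFFFFFFC0A00123)))  # 0x9f123
```

Because the virtual address is unchanged, relative jumps and branches inside the copied page still land where the original code expects them.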

To protect our memory from the guest, we made use of Intel’s Extended Page Table feature, and here we have to treat read and execute accesses separately from write accesses. For the first two, we simply redirect all guest accesses to a specific guard page. This is a single page allocated in hidden memory, to which every access targeting hidden memory is redirected. We can do this by simply mapping all of the hidden memory’s guest physical addresses to the host physical address of the guard page in the corresponding EPT page table entries. The nice thing about this is that it does not require any interception by the hypervisor; all of it is done in hardware by the MMU.

As for write accesses, in order to prevent the guest from overwriting our memory, we simply withdraw write privileges from all appropriate EPT PTEs, which will cause an EPT violation as soon as the guest tries to write to these areas. And EPT violations can simply be intercepted by our hypervisor’s event handler.
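Both protection rules map directly onto EPT leaf entries, whose bit 0 grants read, bit 1 write, and bit 2 execute access, with the memory type in bits 5:3 and the host physical address starting at bit 12. The sketch below models the EPT as a flat dictionary from guest page frame numbers to leaf entries (real EPT is a four-level tree) and points every hidden guest page at one guard page with read and execute, but no write, permission. The guard address and page numbers are hypothetical.

```python
EPT_READ, EPT_WRITE, EPT_EXEC = 1 << 0, 1 << 1, 1 << 2
EPT_RWX = EPT_READ | EPT_WRITE | EPT_EXEC
EPT_MEMTYPE_WB = 6 << 3                     # bits 5:3 = 6 (write-back)
ADDR_MASK = ((1 << 52) - 1) & ~0xFFF        # host physical address bits 51:12


def ept_leaf(hpa, perms, memtype=EPT_MEMTYPE_WB):
    """Build a 4 KiB EPT leaf entry pointing at host physical address hpa."""
    return (hpa & ADDR_MASK) | memtype | perms


def protect_hidden(ept, hidden_gpas, guard_hpa):
    """Redirect hidden guest pages to the guard page and withhold write access."""
    for gpa in hidden_gpas:
        ept[gpa >> 12] = ept_leaf(guard_hpa, EPT_READ | EPT_EXEC)


def causes_ept_violation(ept, gpa, access):
    """True if an access (bitmask of EPT_READ/WRITE/EXEC) is not permitted."""
    entry = ept.get(gpa >> 12, 0)           # missing entry = not present
    return (entry & access) != access


# Identity-map 1 MiB of guest memory, then hide one page (example values).
ept = {pfn: ept_leaf(pfn << 12, EPT_RWX) for pfn in range(256)}
protect_hidden(ept, [0x9F000], guard_hpa=0x12345000)
```

Reads and instruction fetches on the hidden page are silently served from the guard page by hardware; only writes leave the guest and reach the hypervisor.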

Speaking of the event handler, we try to keep guest interceptions to an absolute minimum, because which events need to be intercepted depends so heavily on the use case. But there’s one thing we really had to intercept, and that is, of course, EPT violations, as I said on the last slide, simply to protect our hidden memory from being overwritten. Here, we fake inaccessible memory that is not backed by any RAM. The typical behavior of areas that are not backed by RAM is that if you write to them, simply nothing happens. So, our hypervisor simply discards the write.
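The resulting behavior can be modeled as follows. This is a behavioral sketch, not hypervisor code: `StyxLikeMemory` is hypothetical, reads of hidden pages return the guard page’s contents, and writes to them trigger a simulated EPT violation whose handler drops the data. It assumes that reads of unbacked physical addresses return 0xFF (all ones), which is what the guard page is filled with. A real handler would additionally have to resume the guest past the faulting instruction without performing the write. Against a write-then-read-back probe, hidden pages look exactly like memory without RAM, and no other page is affected.

```python
PAGE = 4096
UNBACKED = 0xFF  # assumption: value read from addresses not backed by RAM


class StyxLikeMemory:
    """Guest view of memory with hidden pages protected via a guard page."""

    def __init__(self, num_pages, hidden):
        self.hidden = set(hidden)
        self.ram = {p: bytearray(PAGE) for p in range(num_pages)
                    if p not in self.hidden}
        self.guard = bytes([UNBACKED]) * PAGE   # single read-only guard page
        self.discarded_writes = 0

    def read(self, page, n):
        if page in self.hidden:                 # EPT: R/X go to the guard page
            return self.guard[:n]
        return bytes(self.ram[page][:n])

    def write(self, page, data):
        if page in self.hidden:                 # EPT: no W, so a violation exit
            self.on_ept_violation(page, data)
            return
        self.ram[page][:len(data)] = data

    def on_ept_violation(self, page, data):
        # Mimic memory without RAM: the write simply has no effect.
        self.discarded_writes += 1


def probe(mem, page):
    """Write-then-read-back probe in the spirit of the detector above."""
    token = page.to_bytes(8, "little")
    mem.write(page, token)
    got = mem.read(page, 8)
    if got == token:
        return "ram"
    if got == bytes([UNBACKED]) * 8:
        return "looks-unbacked"
    return "suspicious"


mem = StyxLikeMemory(num_pages=16, hidden={10, 11})
print({p: probe(mem, p) for p in (3, 10, 11)})
```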

For the evaluation, we used a 64-bit Ubuntu Server system as a virtualized KVM guest. This was the predominant server system configuration at the time we started the project. We then evaluated our system with various numbers of virtual processors and different amounts of memory, and one thing we noticed is that the capacity of hidden memory was extremely dependent on the system’s configuration. So, for best results, we simply assigned four gigabytes of RAM to our test system.

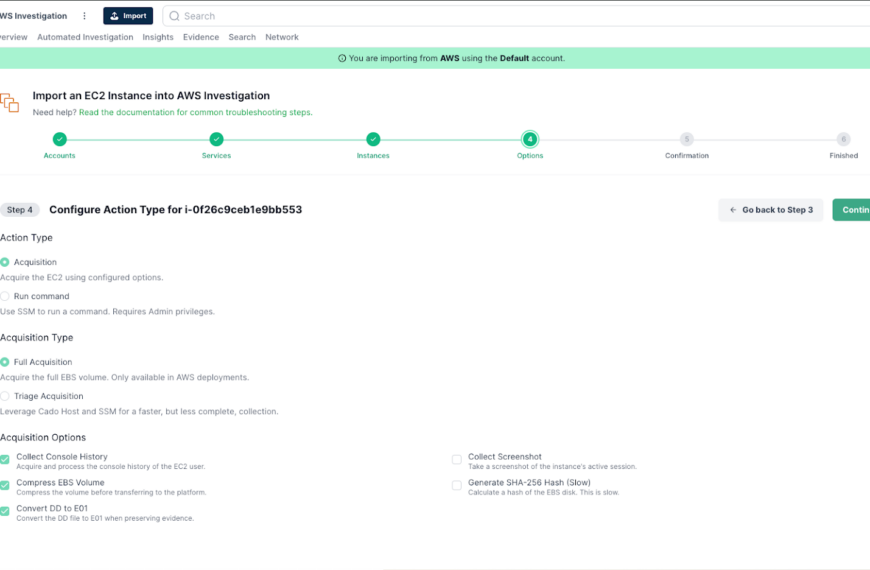

We then evaluated the stealthiness of our rootkit by examining traces in the guest file system as well as in physical memory. Since we removed both kernel modules from the guest memory and wiped all of our rootkit’s files, there were no traces left in the file system. To evaluate the guest’s physical memory, we first had to enhance the Linux version of the Pmem physical memory dumper, which is part of the famous Rekall framework, so that it could acquire hidden memory at all.

We therefore implemented the same hidden memory enumeration algorithm in the memory dumper, so that it was able to acquire this special type of memory. And of course, since our EPT protection mechanism was enabled, there were no indicators that would point to the presence of our rootkit.

So, what did we achieve? We have a few benefits over hidden memory rootkits. First of all, we don’t need to install any hooks in the system; all of it can be done through VT-x. We have also isolated our memory from the guest. But we also have benefits over hypervisor rootkits, which we achieved by faking inaccessible memory that has no RAM behind it. The detection mechanism I explained at the beginning of this talk no longer works, since we intercept and discard write accesses and redirect read accesses to the guard page, which contains the default value that would have been read from areas that are not backed by RAM.

In conclusion, only one four-kilobyte guard page suffices to protect our entire rootkit.

There are some limitations. The first and biggest limitation was the very small amount of hidden memory we could find. Furthermore, Styx has so far only been tested within nested KVM, as we were afraid of damaging our machine during the memory probing phase: some of the read-only memory areas could not be protected as they should have been, and we wanted to prevent that.

There is no protection against DMA accesses, so any acquisition software that relies on DMA can simply bypass our memory protection mechanism.

In the future, we want to evaluate further systems, especially regarding the capacity of available hidden memory. We want to test Styx on further system configurations, including various kernel versions, although we expect that only minor modifications will be required to make it work on the latest kernels. Furthermore, we want to integrate forensic plug-ins, as shown in projects like HyperSleuth or Vis. And we want to implement a DMA protection engine that makes use of Intel’s VT-d feature and configures an IOMMU, so that all DMA accesses can be redirected with the same mechanism we used for standard memory.

In conclusion, we implemented a proof-of-concept rootkit called Styx, which combines hidden memory with hardware-based virtualization features and is able to counter even robust memory acquisition tools that run within the target system. It resides entirely in hidden memory, isolates its footprint via EPT, and deletes all of its remaining traces through a second kernel module.

We then evaluated our system with an enhanced version of the Pmem dumper, and no indicators were found that would reveal the presence of our rootkit.

To conclude, we highly doubt that malicious versions of this type of rootkit will appear in the wild, since they depend so heavily on a specific system’s configuration. However, we want to point out that they are indeed feasible for very targeted attacks.

This brings me to the end of my talk, and I want to thank you for your attention and I’m happy to answer your questions.

[applause]