Manoranjan Mohanty: Hello, everyone, and thanks for watching this video. This video is about our paper “Towards Deep Fake Video Detection Using PRNU-Based Method”. This work was done in collaboration with UTS and the University of Auckland.

So, deep fake videos are not new to us. We know that the number of deep fake videos is increasing every day, and we also acknowledge the danger that they bring to our society. This is one example that shows how difficult deep fake videos can be to detect, and how they can be used to make very natural-looking fake videos.

So, this is the real actor, and this particular scene is from one of the movies in the Indiana Jones series. The real actor is Harrison Ford, and the movie came out, I think, in 1981, if I remember correctly. Then, using deep fake techniques, this actor has been replaced by Nicolas Cage.

As you can see, for a normal viewer who doesn’t know who the original actor was, it is really difficult to tell that Nicolas Cage was not the actor in that movie. In this example the threat may not be visible, but just imagine if these were pornographic videos: in that case, deep fakes can be used to create pornographic videos of anyone.

Deep fake videos can also be used, and are being used, to create fake information, mainly in the political domain. Research on this is ongoing and I’m not going to talk more about it, but the obvious question is how to find out whether a particular video is a deep fake or not.

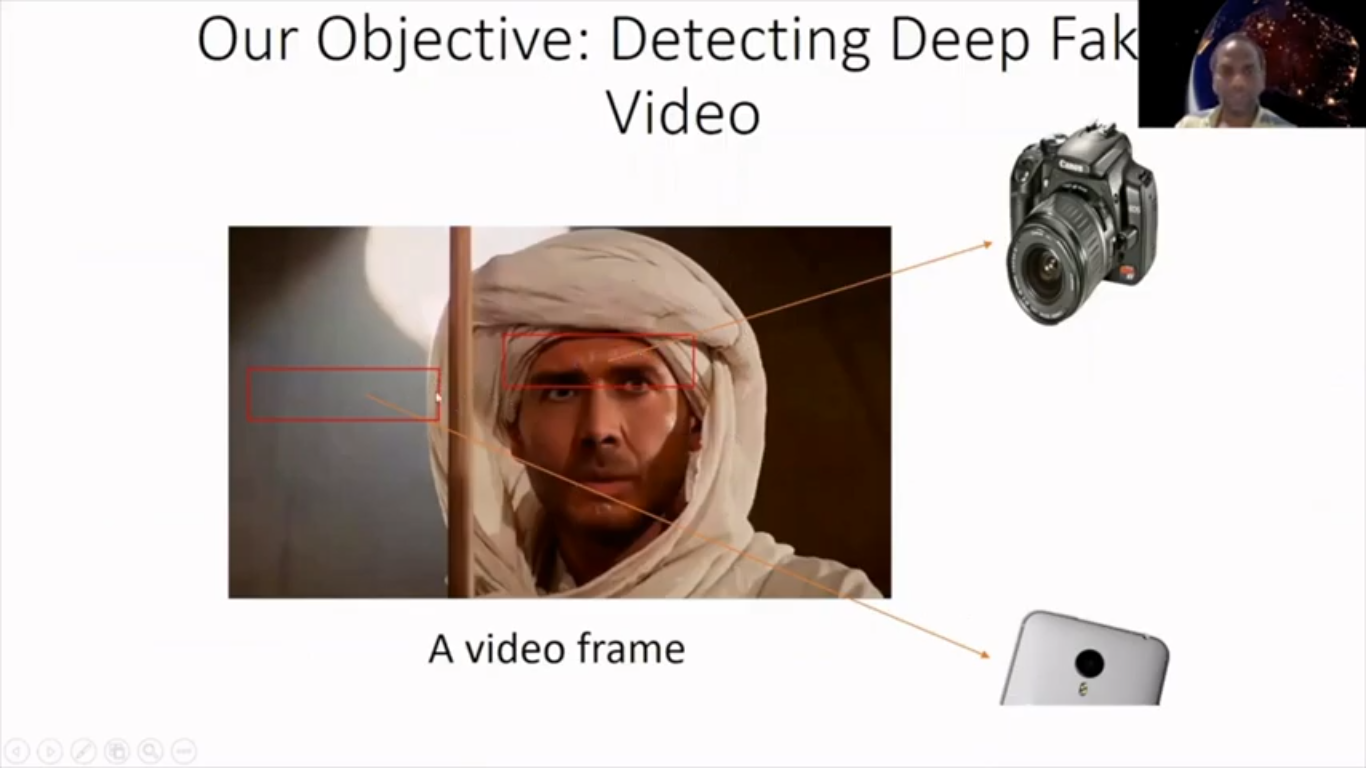

So, that’s a question many people are currently working on, and we also looked at this problem in this paper. Our way of finding out whether a particular video is a deep fake is to divide the video into multiple parts (here we have shown two different parts) and then find out whether all the parts belong to the same camera.

If two different parts of the video were filmed by two different cameras, then we can assume that the video has been tampered with. By the way, what is shown here is a single frame of the video. So our task was to find out whether a particular part of the video belongs to a particular camera, and for this we explored the PRNU-based method.
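To make the decision rule concrete, here is a minimal Python sketch, assuming grayscale frames stored as NumPy arrays and a simple rectangular grid of parts. The names `split_into_parts` and `looks_tampered` are hypothetical, and the per-part camera labels are assumed to come from a separate attribution step; this is an illustration, not the paper's code.

```python
import numpy as np


def split_into_parts(frame: np.ndarray, rows: int, cols: int) -> dict:
    """Split a 2-D frame into a rows x cols grid (hypothetical helper).

    Returns {(row, col): (row_slice, col_slice)} so that the same grid can
    later be applied to a camera fingerprint of the same size.
    Leftover pixels at the bottom/right edges are ignored.
    """
    h, w = frame.shape
    bh, bw = h // rows, w // cols
    return {
        (r, c): (slice(r * bh, (r + 1) * bh), slice(c * bw, (c + 1) * bw))
        for r in range(rows)
        for c in range(cols)
    }


def looks_tampered(part_cameras: dict) -> bool:
    """Flag a frame whose parts are attributed to more than one camera.

    part_cameras maps each grid cell to a camera ID, or None when no
    candidate camera matched that part (such parts are ignored here).
    """
    cameras = {cam for cam in part_cameras.values() if cam is not None}
    return len(cameras) > 1
```

Parts that match no known camera are ignored rather than flagged, on the assumption that a weak PRNU signal (for example in a very dark or saturated region) should not by itself raise an alarm; a stricter variant could treat such parts as suspicious.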

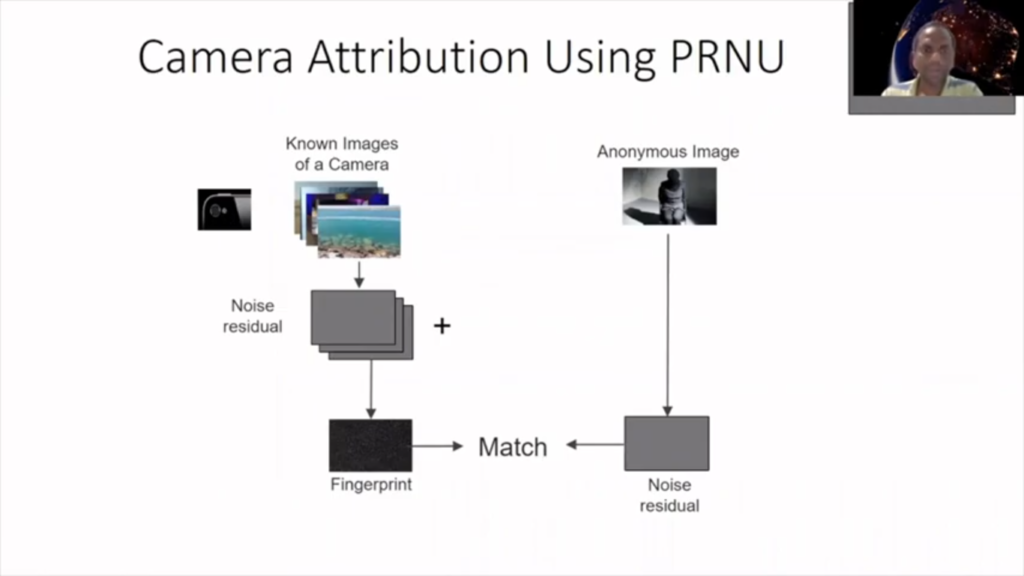

So, the PRNU (photo-response non-uniformity) based method is a very popular method to find out whether a particular anonymous image was taken by a particular camera. Here the key lies in the noise of the camera sensor, and we have to find this noise.

To find that noise, we need a certain number of images from this camera, maybe 10 or 20. We extract the noise from each image and then combine those noise patterns, and the result is the PRNU noise, or rather the estimated PRNU noise, because we are doing an estimation here. It turns out that this estimated PRNU noise is unique to this particular camera, and that’s why we call it a fingerprint.
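The fingerprint estimation step can be sketched as below. It uses the maximum-likelihood style estimator common in the PRNU literature, K ≈ Σ W_i·I_i / Σ I_i², where W_i = I_i − F(I_i) is the noise residual of image I_i and F is a denoiser. This is an illustrative assumption, not necessarily the exact estimator used in the paper, and the 3x3 moving-average `box_denoise` is a crude, hypothetical stand-in for the wavelet-based denoiser usually used in practice.

```python
import numpy as np


def box_denoise(img: np.ndarray, k: int = 3) -> np.ndarray:
    """Crude k x k moving-average denoiser with edge padding.

    Hypothetical stand-in for the wavelet denoiser typically used for PRNU.
    """
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)


def noise_residual(img: np.ndarray) -> np.ndarray:
    """W = I - F(I): what is left after removing the scene content."""
    img = img.astype(np.float64)
    return img - box_denoise(img)


def estimate_fingerprint(images: list) -> np.ndarray:
    """Estimate K from several images of one camera: K = sum(W*I) / sum(I^2)."""
    num = np.zeros(images[0].shape, dtype=np.float64)
    den = np.zeros(images[0].shape, dtype=np.float64)
    for img in images:
        img = img.astype(np.float64)
        num += noise_residual(img) * img
        den += img * img
    # Guard against division by zero in completely black pixels.
    return num / np.maximum(den, 1e-12)
```

Weighting each residual by its image intensity reflects the multiplicative nature of PRNU: the sensor pattern is stronger in brighter pixels, so those pixels contribute more to the estimate.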

So, after that, we similarly extract the noise from an anonymous image and compare it with the fingerprint, which we can prepare beforehand, to find out whether they match. If there is a match, we conclude that this anonymous image was taken by this particular camera.
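Matching can be sketched as a normalized cross-correlation between the query image's noise residual and the fingerprint modulated by the image content (I·K). The PRNU literature often uses the peak-to-correlation energy (PCE) statistic instead; plain correlation, the name `matches_camera`, and the threshold of 0.05 are assumptions chosen for illustration. The residual is assumed to have been extracted already, ideally with the same denoiser used to build the fingerprint.

```python
import numpy as np


def ncc(a: np.ndarray, b: np.ndarray) -> float:
    """Normalized cross-correlation of two equally shaped arrays."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0


def matches_camera(residual, image, fingerprint, threshold=0.05):
    """Return (matched, score) for a query residual against one fingerprint.

    The expected PRNU term in the image is I * K, so the residual is
    correlated against that rather than against K alone.
    threshold=0.05 is a hypothetical value, not a calibrated one.
    """
    expected = image.astype(np.float64) * fingerprint
    score = ncc(residual, expected)
    return score > threshold, score
```

In a real system the threshold would be calibrated on images from known cameras, for example to fix a target false-alarm rate, rather than chosen by hand.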

Now, this is for a full image. What’s really good about the PRNU-based method is that a separate line of research has shown it to be a really reliable method for this kind of problem. It has also been shown that we can find out whether part of an image was taken by a particular camera. In that case, we simply divide the image into parts and check whether the noise from each part matches the corresponding part of the fingerprint.
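Part-based matching can be sketched by applying the same correlation to corresponding grid cells of the residual and of the image-modulated fingerprint. The grid layout, the function name `match_parts`, and the 0.05 threshold are hypothetical choices for illustration, not details taken from the paper.

```python
import numpy as np


def ncc(a: np.ndarray, b: np.ndarray) -> float:
    """Normalized cross-correlation of two equally shaped arrays."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0


def match_parts(residual, image, fingerprint, rows, cols, threshold=0.05):
    """Compare each grid cell of a residual with the same cell of I * K.

    Returns {(row, col): (matched, score)}. Leftover pixels at the
    bottom/right edges are ignored. threshold is a hypothetical value.
    """
    h, w = residual.shape
    bh, bw = h // rows, w // cols
    expected = image.astype(np.float64) * fingerprint
    results = {}
    for r in range(rows):
        for c in range(cols):
            cell = (slice(r * bh, (r + 1) * bh), slice(c * bw, (c + 1) * bw))
            score = ncc(residual[cell], expected[cell])
            results[(r, c)] = (score > threshold, score)
    return results
```

If some parts of a frame match one camera's fingerprint and other parts do not, that is the kind of inconsistency described above as evidence of tampering. Smaller parts carry less PRNU signal, so the part size trades localization against reliability.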

So, based on that hypothesis, we did certain experiments in a [indecipherable] state. The experiment was limited, with a limited data set, and in a controlled environment, meaning that we controlled what went into the data set. But we acknowledge that for this method to be really successful, it should work reasonably well for any video available on the web, PRNU sophistications and that should [indecipherable], and that is what we’re working on.

And from our limited and controlled experiment, it looks like the PRNU-based method has the means to find out if a video is…