Session Chair: So the next speaker is Timothy. It’s going to be online, so Timothy, are you ready?

Timothy: Hi, everyone. So I’m Timothy Bollé, I’m a PhD student at the University of Lausanne. And today I will give a brief presentation about some research we are doing with Hannes Spichiger, who is also a PhD student at the University of Lausanne and our supervising professor, Eoghan Casey.

So, the idea of this presentation is really to start a discussion about inferences in digital forensics, and also to discuss the importance of those inferences and how we can try to formalize their representation. So any feedback, any comments are very welcome during the presentation.

And to start, we will go with what we are used to working with. It means digital traces. We will take two scenarios to see if the approach can be applied in a different context. And the first one is pictures on mobile devices.

So here we have two pictures: we have a selfie of the suspect, and we also have a picture of what looks like an airport. The other situation will be fraud investigations in which we found some email addresses. And starting from there, we will try to make some inferences.

So what’s an inference? Basically, it’s just a set of logical processes that we will apply to try to draw conclusions from our traces. The first step we have to do to do inferences is to ask a question. If we don’t have any questions, we don’t have any inferences to make. It’s just there, it’s just extracting traces.

So when we will start to analyze the traces, we will need to have a question to answer. And this question will also guide which type of analysis we will do and what information will be relevant or not.

As we do this analysis, we will also have to take into account limitations that are around all traces; such as procedural errors, accuracy, or any element that will influence the final conclusion.

And the last step that we will have to do, and there may be others, is to try to express some degree of certainty on our final conclusions.

So we analyze our traces, we take into account limitations, and then we have a set of possible conclusions and we will have to express some degree of certainty of how confident we are or some probabilities and so on. And this is really a challenging aspect in today’s digital forensic science field. So let’s go back to our example.

How about the first step we said is to understand the question. So if we are in the situation of having to answer where a person was located at some point, and we have at our disposal traces from the phone, we’ll see that it’s not just the same question, like, answering where the phone was and answering where the person was.

Because basically, if we are interested in the location of the person, we will also have to answer the question: was the person using the phone at that time? So it had some new questions. We will have to do new analysis taking into consolidation other traces to answer a different question.

If we talk about our online frauds, we could be interested in just determining if two cases are in the same series, meaning that it’s the same perpetrator that did both cases, or we could be interested in also just to find similar thoughts that have the same modus operandi in order to have a better understanding of a particular phenomenon.

Because the questions are different, the trace we could use could be different, or the analysis and the interpretation will be different. Here, for instance, if we take the selfie that we found on the phone, we will now start to analyze it.

So we have the face of the person that we can use for identification, and we might also find some GPS information inside the EXIF data, for instance.

So starting the analysis of the traces, we start to construct some possible conclusions. So one could be that a person was at the Ciampino Airport at the given time, and we could have an alternative hypothesis that the person was not there at the time. So this is a basic analysis of traces.

Then we have to take into account the limitations around that. So for instance, for the location of the device here, we have GPS traces, we might ask what was the accuracy around this geolocation, we might also want to ask what level of accuracy we need. That might be, depending on the question we are asking, we might be interested in having just a location at the level of a country or a city or a particular building.

So this will add some uncertainty in our conclusions. Also, for the identification of the person here we have like a clear selfie with the face well lit and there is the full face, but we could imagine some situation where we are in the shadows or the face is partially covered with sunglasses or things like that, which will add also another level of uncertainty in determining if the suspect was using the phone at that time.

And at the end, the idea is to combine all of this uncertainty and all of this evidence to try to draw a possible conclusion of where the suspect was at the given time. And so this aspect of combining uncertainties at different levels to answer a more general question is what Hannes Spichiger is working on, actually.

So here we made basic inferences to try to answer our primary questions. We have another example here with online fraud. So our basic idea was that we can use the strong similarities between email addresses to try to find fraud cases that were done by the same person.

So that’s the situation on the left. We see that we have two names that are not very common in two different cases. They are close, not exactly the same, but in this situation, we are kind of confident that both cases are linked. So we will create a series of crimes based on that.

But what we observed is also that we might find the same level of similarity in other situations, that’s the part on the right, where even if we have the same analytical results, the same similarity score, we were not confident in saying that those two cases were in the same series of crime, because here we have rent4b&b and rentairbnb.

And because they’re common words around Airbnb, because it’s very common we are not confident in saying like, this is the same crime series. And that’s the interesting part about inferences is that even if the analytical result is the same, because we took into consideration other elements, our conclusions are different.

So here we tried with those two examples, just to show you a little bit some inferences we can make from traces. Now we will just have a brief look at how it can be represented in a more formal way than just saying it like I just did.

And in order to do that we use the case of standard formats to represent digital evidence and actions and so on, and we will try to represent an inference. So case objects always have an identifier and here the type is inference.

And then what we will represent is the basis evidence, which means the object that our inference is based on, like, the traces that we are taking into consideration.

We also have the hypothesis, which is a possible conclusion. So we usually will try to have different multiple hypotheses, at least two to avoid bias. And this is one of our conclusions.

Then we will try to describe the method we used to do the inference, and next we have the evaluation type and the evidence evaluation, which are the scale we will use to indicate our degree of certainty.

So here in the example, it’s a probability, so it’ll be a value between a zero and one; but we could also imagine using a confidence scale; so the value could be low, medium, high; or we could have a Boolean scale, we are sure it’s false or either we are sure it’s true.

So this is really to help try to represent the degree of certainty we have in our conclusion, based on the evidence we took into consideration. And finally, we have the evaluation rationale, which will describe what logic did we apply to go from the traces to the conclusions.

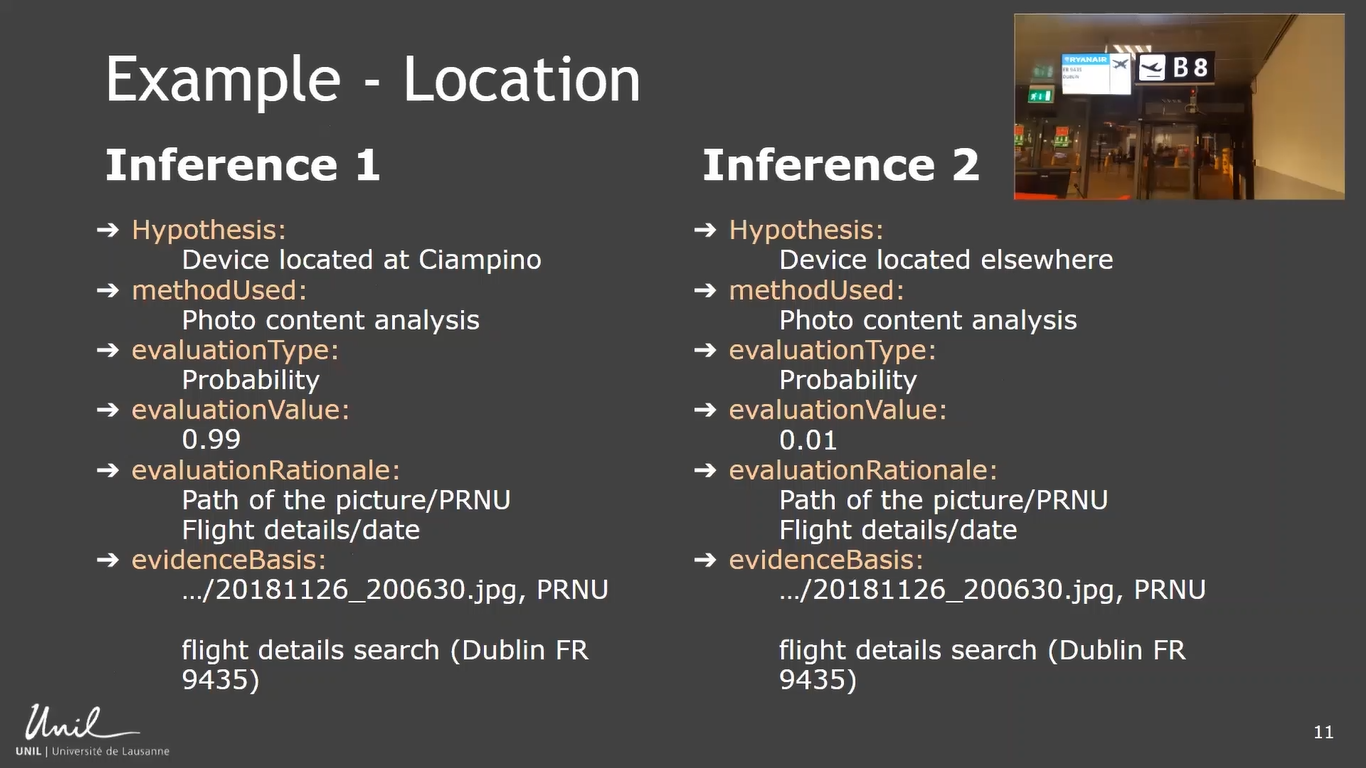

And here is a possible example with our picture on our mobile device. So we had two hypotheses: the device was located at Ciampino Airport, and the device was located somewhere else. And the method we used in both cases was the photo content analysis.

So we looked at the content of the picture and we used here a probability scale to express our certainty in the conclusions. And then the really interesting part, in my opinion, is the evaluation rationale. Here we will explain, okay, we took into consideration to establish our conclusion.

We took, for instance, the path of the picture, to see if it’s the default path of where our picture is stored when you take a picture with a phone. We could also have looked at PRNUs to see if the noise in the picture is the same as the noise generated by the camera. We can look inside the picture, we have some information about some flight details, like a flight to Dublin.

So we could check with the Ciampino Airport if at the time the picture was taken a flight to Dublin was organized. So here we really detail what logic did we follow to generate our conclusions, and the evidence basis will really be the traces that we used, the raw material, let’s say.

And so really, the good part about this representation is that it helps to have some transparency about the inference we made and it helps the reliability, also. We can go back and see what we did and how we reached some conclusions and it can really help also to explain to others what we did.

I’ll be brief on the online fraud. It was just to mention that here in this case, we have the same set of hypotheses, but we followed two different approaches. One just considering the analytical resource, the similarities score; and in this other situation, we take into consideration other elements like the rarity of the strings and so on.

And so here you can see that you can compare two different approaches, two different logics, two different types of inference, and you can compare at the end results. So it also helps to compare logic and understand again how you reach some conclusions.

So here is the take home message. The inferences, we are doing it all the time. It’s what brings added value to digital forensic work. Without that it would be just extracting data. So we are doing inferences to interpret and evaluate traces to answer some questions.

And there are some benefits to represent in a more formal way those inferences and the logic we follow, so that it’s easier to compare and there is more transparency and reliability. Thank you for your time. If you have any questions, let me know.

Session Chair: Okay. So do we have any questions? Yes.

Audience Member 1: Hi, thank you very much. It seems to me, and this is previous experience for me, but actually what you’re talking about is something that is very familiar to Prolog programmers, for those of us that are old enough and unfortunate enough to have had that experience. Is this something that you’ve taken as part of this model, or are you looking at it in any way?

Timothy: Not at all. Maybe I’m too young. I don’t know. The Prolog is not something I’m familiar with and it’s definitely something we can look into to see if it can also feed the case community with other idea of how to represent things.

Audience Member 1: And it might be useful. I mean, it understands the concepts of inference and also probability. So you may find it a useful tool to try out, but thank you for the talk.

Timothy: Thank you.

Session Chair: Other questions? So I have a question. So I agree with the question that was made about Prolog, because the inferences that you are trying to make, they are logic-based, or at least it seems like you are using some kind of logic. And there I wanted to ask you, are you using some type of rule-based inference? How is the inference made?

So in this case, you’re using the geolocation. So the GPS, and then from the picture then you are making an inference of where was this person located. Do you have other cases, or how do you perform this inference? So this is like the logical part. How do you decide from certain types of information to go to conclusion?

Timothy: It really depends on what question you are trying to answer and also what type of data you have. As you said, it might be like basic rule-based to apply some basic rules.

Like, if we know how, for instance, this morning we had a talk about how the timestamps react on a file system based on some operations. So we can use this to help reach our conclusions, but sometimes it’s more complex because you have some rules, you also have the context that helps you.

Like, for instance, with online, for example we could say a basic rule, if the similarity is superior to 0.6, then we have a link. But when you take into consideration the rarity of the strings and the context of your cases, you see that it will really influence your conclusions. And that’s where there is some difficulty to formalize it.

And also where it’s good to start trying to see how it can be described. Even if it’s not like a very formal mathematical set of rules that we can apply saying that, okay, we look at the context, we looked at the rarity of the strings, we looked at the similarity scores and we combined that and we reached this conclusion with this level of certainty. That’s also where we’re indicating our degree of certainty that our conclusion is useful is that you can try to represent to others how you reach that conclusion.

Session Chair: Okay. So there is a question online. This is, “Does there exist a JSON Schema for CASE? And does this support binary object hash embedding?” And I think Eoghan is already answering here.

Timothy: Yeah. So Eoghan is really the master of CASE. But yes, it has a JSON format and it supports a lot of things, not just inference, also traces, actions and so on. And really it’s also a community effort. So if you think that something is missing, don’t hesitate to reach out and propose possible representation.

Session Chair: Other questions? Okay, then. Thank you very much, Tim, for this presentation.