How many times have you said or heard “I’ll believe it when I see it”? This expression reveals the dramatic persuasive power of our eyes: when you see something, you tend to believe it is true much more readily than when you hear or read about it. In the digital age, for most people, this persuasive power has seamlessly extended to the pictures they see on their computers or smartphones. Unfortunately, we all know how easy it is to forge images nowadays, to the point that seeing is no longer believing.

Fake images can play a crucial role in many aspects of our lives: politics, information, health, insurance, reputation, social media identity, terrorism. Virtually every aspect of our existence is related to images in some way.

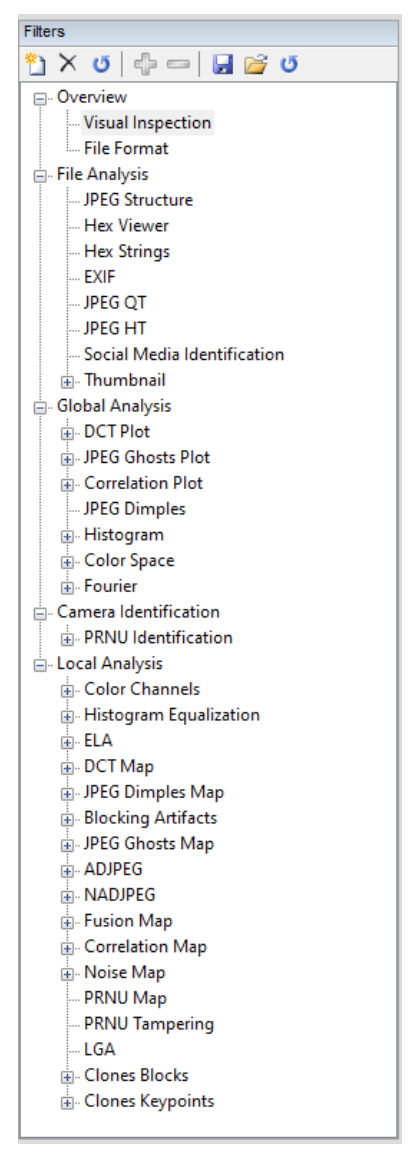

When image authenticity matters for your case, you need the knowledge and the tools to investigate your image’s lifecycle and reveal possible inconsistencies. Amped Authenticate is the most complete, user-friendly, and well-documented image forensics suite available, providing more than 40 different tools that let you work your way from simple visual inspection down to byte-level analysis, metadata inspection, compression analysis, source device identification, and forgery localization. Just look at Amped Authenticate’s Filters panel to see how many filters it contains. They are grouped into categories: Overview, File Analysis, Global Analysis, Camera Identification, and Local Analysis.

Let’s look at a practical case: we are asked to authenticate this digital image, which was submitted by the person shown in it to prove he was in a seaside town on a specific day in November 2015.

Once the evidence image is loaded, simply clicking on a filter name displays its result. We may also load a reference image and view the filter results for the evidence and the reference side by side. This is especially useful when you can obtain an original image from the same device model declared in your evidence image’s metadata.

To begin with, the Visual Inspection filter reveals a strange artifact near the subject’s eyebrows. Odd as it is, an artifact of this kind is probably not compelling enough on its own to rule out image authenticity.

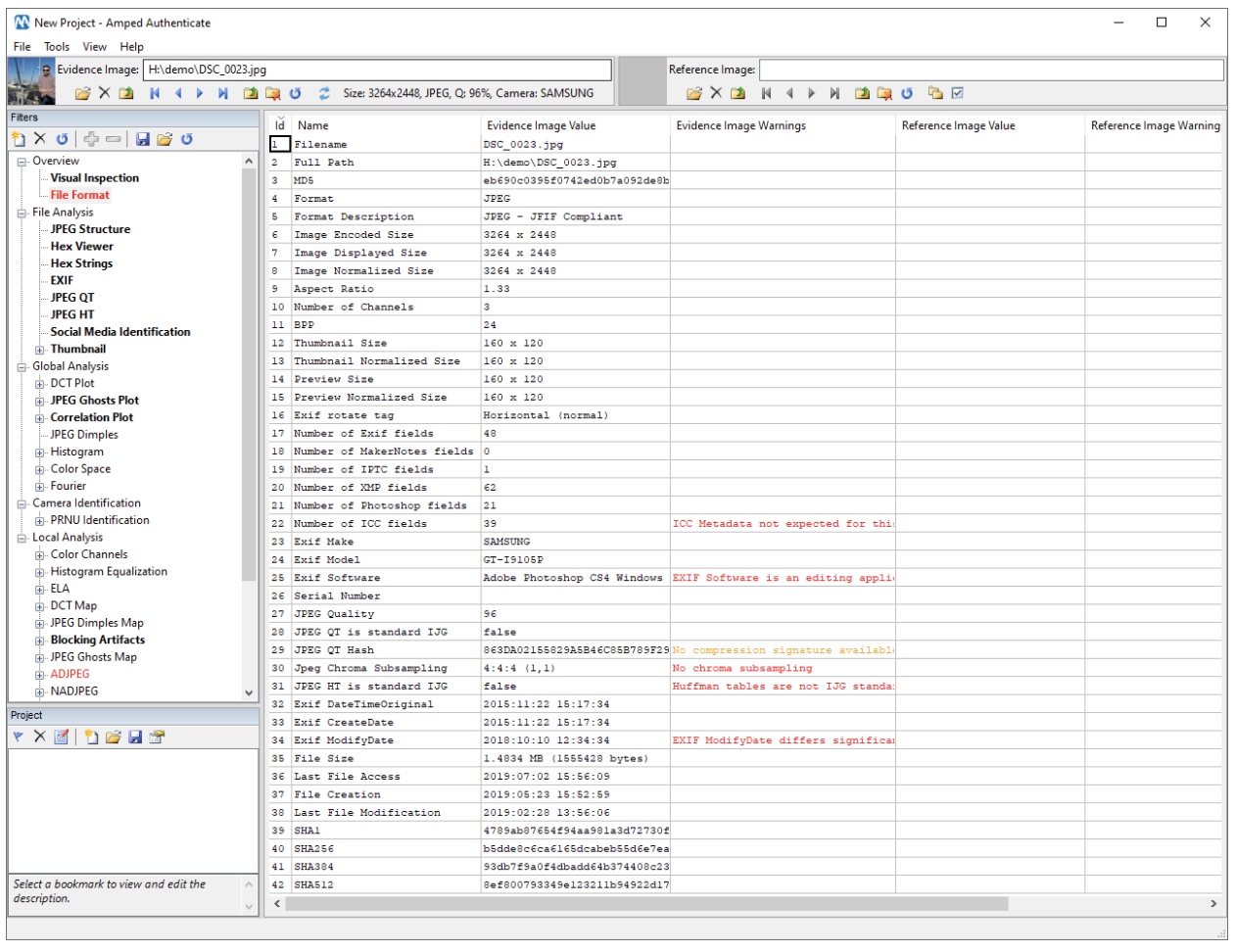

Below you can see the File Format filter result for our evidence image: the number of warning messages already suggests that this is hardly a camera original image (that is, an image that has never been processed in any way after being captured).

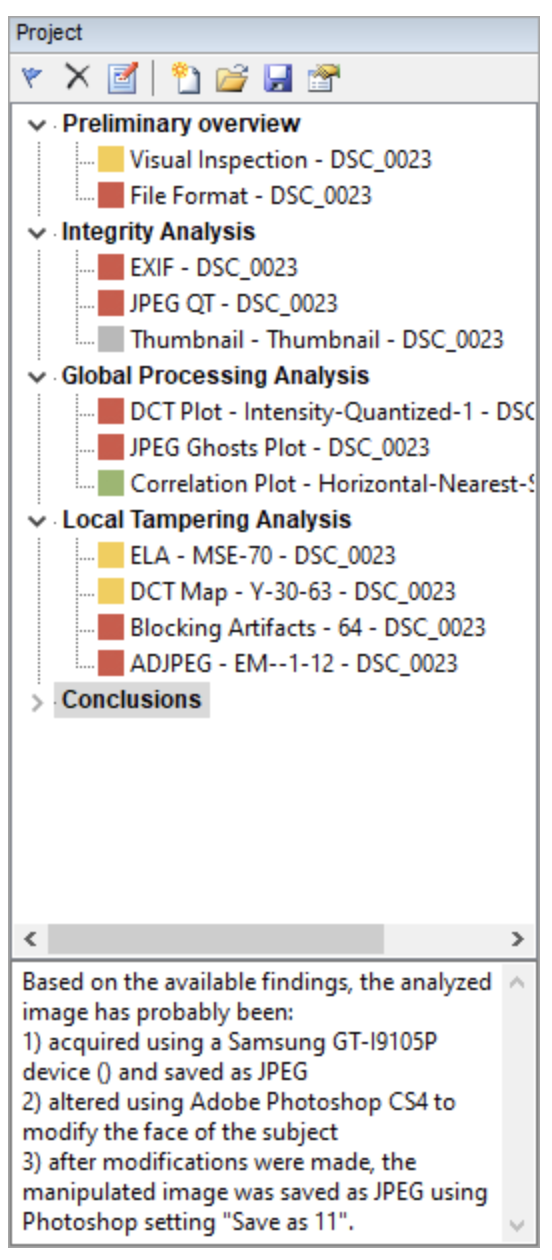

As you may have guessed from the list of filters in the Filters panel, a full image authentication takes time and requires proper reporting, to ensure repeatability without losing your reader in the technical details of what you did. For this reason, Amped Authenticate has recently gained a Projects functionality, which provides an intuitive way for users to organize their analysis. An Amped Authenticate project is made of a list of bookmarks (defined below), optionally organized into folders. Whenever users find an interesting result, they can bookmark it using the Project panel.

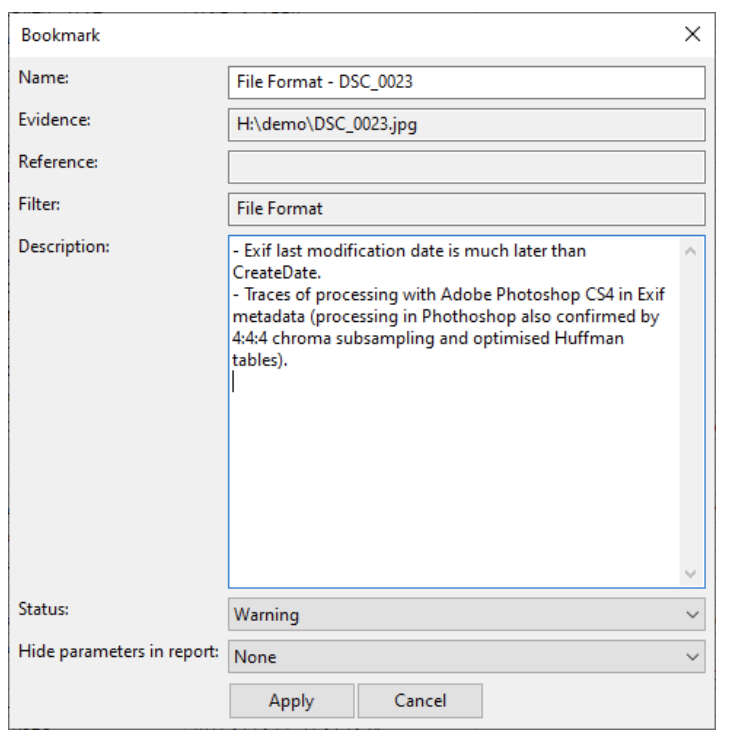

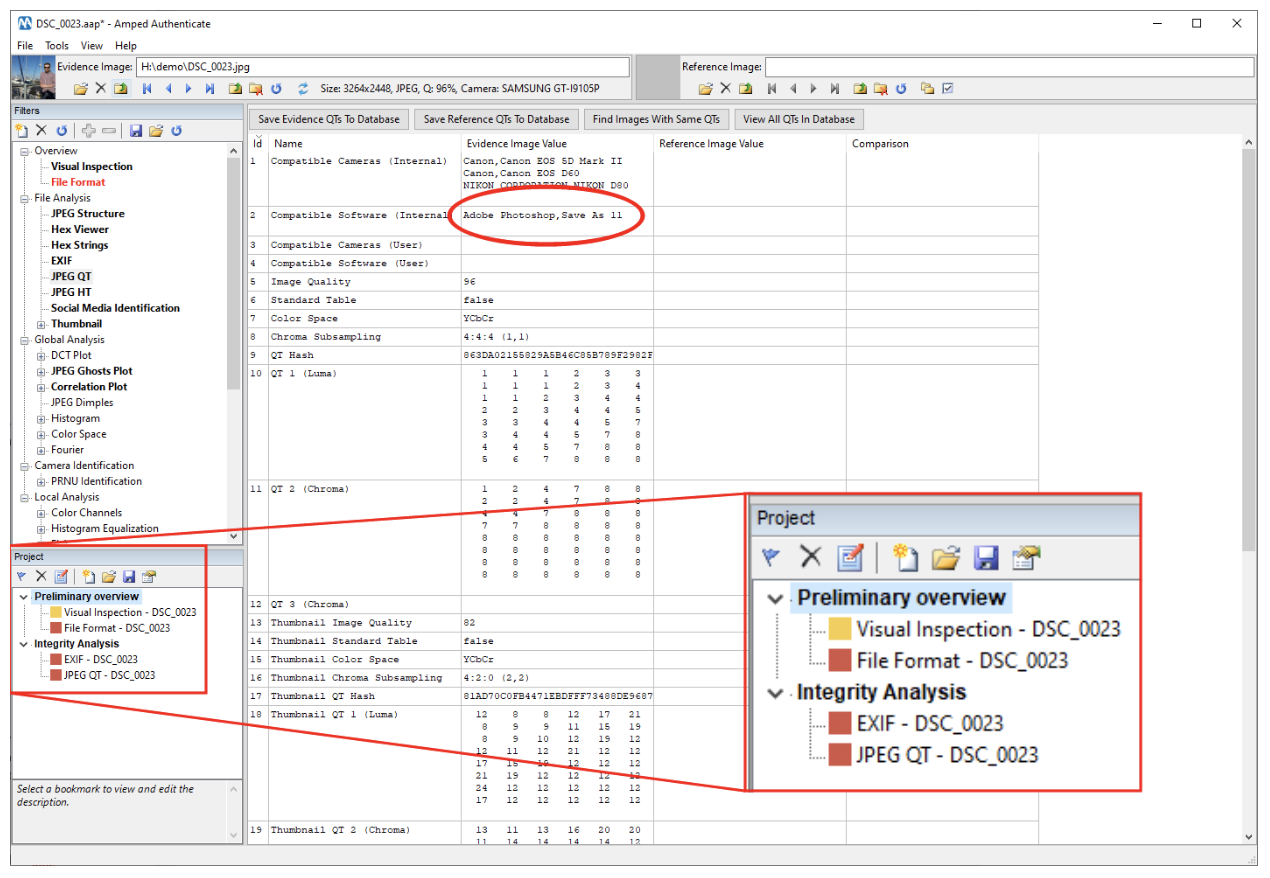

But what is a bookmark? When a bookmark is added, the currently selected filter (including its input and any post-processing parameters), together with the paths to the currently loaded evidence and reference images, is saved as an entry in the Project panel. By default, the bookmark is named after the active filter and the currently loaded evidence and reference images. Folders can be added by right-clicking on the panel and selecting “Add folder”. For example, in our case we may bookmark the File Format filter as shown below: notice that we set the bookmark “Status” to “Warning”, which means it will be highlighted in red in the report.
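To make the bookmark concept concrete, here is a minimal Python sketch of how a project made of bookmarks and folders could be modeled. The `Bookmark` and `Folder` classes, their field names, the `move` helper, the “ - ” naming scheme, and the file paths are all hypothetical illustrations; they are not Amped Authenticate’s internal data model or project file format.

```python
import os
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Bookmark:
    """Hypothetical bookmark: a filter, its settings, and the loaded images."""
    filter_name: str
    evidence_path: str
    reference_path: Optional[str] = None
    input_params: dict = field(default_factory=dict)
    post_processing: dict = field(default_factory=dict)
    status: Optional[str] = None  # e.g. "Warning", "To Check", "OK"
    description: str = ""
    name: str = ""

    def __post_init__(self):
        # Default name built from the active filter and the loaded image names.
        if not self.name:
            parts = [self.filter_name, os.path.basename(self.evidence_path)]
            if self.reference_path:
                parts.append(os.path.basename(self.reference_path))
            self.name = " - ".join(parts)


@dataclass
class Folder:
    """Hypothetical folder holding bookmarks and/or nested folders."""
    name: str
    items: list = field(default_factory=list)
    description: str = ""


def move(item, source, target):
    """Move an item between folders, like dragging it in the Project panel."""
    source.items.remove(item)
    target.items.append(item)


# Rebuild the first steps of the case with hypothetical paths.
project = Folder("Project")
file_format = Bookmark("File Format", "/case/evidence.jpg", status="Warning")
visual = Bookmark("Visual Inspection", "/case/evidence.jpg", status="To Check")
project.items += [file_format, visual]

overview = Folder("Preliminary overview")
project.items.append(overview)
for bookmark in (file_format, visual):
    move(bookmark, project, overview)

print([b.name for b in overview.items])
```

Keeping the filter settings and image paths inside the bookmark, rather than a rendered picture, is what makes a result reproducible later: the filter can simply be recomputed from what was stored.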

We should also bookmark the Visual Inspection filter, since it raised some concerns; this time we set the status to “To Check”. Notice that the full list of bookmarks created so far, together with their status, is displayed in Authenticate’s Project panel. We decide to create a folder called “Preliminary overview” and move the two bookmarks we’ve just created into it by simply dragging them.

After the preliminary overview, we already strongly suspect that image integrity is compromised: indeed, the elements highlighted by the File Format filter are hardly compatible with a camera original image. Of course, that does not necessarily mean that image authenticity is also compromised! Simply resaving an image with Adobe Photoshop is enough to break integrity, but not enough to break authenticity. We need to investigate further, but first, let’s save the project to file by clicking the classic “floppy disk” button or pressing CTRL+S. Side note: when the project is reloaded, the MD5 hash of every input file is checked, and an error is raised if any mismatch is found.
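The reload check described in the side note can be sketched as follows: store each input file’s MD5 when the project is saved, then recompute and compare it on reload. This is a minimal sketch that assumes the stored hashes are available as a path-to-digest dictionary; the function names are hypothetical, and it does not claim to mirror Authenticate’s actual implementation.

```python
import hashlib


def md5_of_file(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_inputs(stored_hashes):
    """Compare stored digests (path -> hex MD5) with the files on disk.

    Returns a list of (path, problem) pairs; an empty list means that every
    input file is unchanged since the project was saved.
    """
    problems = []
    for path, expected in stored_hashes.items():
        try:
            actual = md5_of_file(path)
        except OSError as exc:
            problems.append((path, f"cannot read file: {exc}"))
            continue
        if actual != expected:
            problems.append((path, f"MD5 mismatch: expected {expected}, got {actual}"))
    return problems


def check_project_inputs(stored_hashes):
    """Raise an error on reload if any input file is missing or modified."""
    problems = verify_inputs(stored_hashes)
    if problems:
        raise ValueError("; ".join(f"{path}: {msg}" for path, msg in problems))
```

Reading the file in chunks keeps memory use constant even for very large evidence files.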

Now that our work is saved and safe, let’s continue our analysis. The filters in the File Analysis category confirm our suspicions. For example, the JPEG QT filter shows that the image’s main JPEG quantization table is compatible with those used by Adobe Photoshop, while the Exif filter confirms traces of Adobe Photoshop in the Software tag and shows that the Exif ModifyDate is much later than the CreateDate (we won’t show this picture for brevity’s sake). We bookmark both filters and put them in a folder called “Integrity Analysis”.
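The two Exif checks mentioned above (an editor named in the Software tag and a ModifyDate later than the CreateDate) are easy to express in code. This sketch assumes the tags have already been extracted, for example with ExifTool, into a dictionary keyed by ExifTool-style names; the function name, the keyword list, and the tag values in the example are made up for illustration and are not the metadata of the image in this case.

```python
from datetime import datetime

EXIF_DATE_FORMAT = "%Y:%m:%d %H:%M:%S"  # Exif's "YYYY:MM:DD HH:MM:SS" layout
EDITOR_KEYWORDS = ("photoshop", "gimp", "lightroom")  # illustrative, not exhaustive


def integrity_hints(tags):
    """Return human-readable integrity hints from a dict of Exif tag values."""
    hints = []
    software = tags.get("Software", "")
    if any(keyword in software.lower() for keyword in EDITOR_KEYWORDS):
        hints.append(f"Software tag names an editor: {software!r}")
    created, modified = tags.get("CreateDate"), tags.get("ModifyDate")
    if created and modified:
        delta = (datetime.strptime(modified, EXIF_DATE_FORMAT)
                 - datetime.strptime(created, EXIF_DATE_FORMAT))
        if delta.total_seconds() > 0:
            hints.append(f"ModifyDate is {delta} later than CreateDate")
    return hints


# Made-up values, not the metadata of the image in this case.
example_tags = {
    "Software": "Adobe Photoshop CC 2015 (Windows)",
    "CreateDate": "2015:11:14 10:32:05",
    "ModifyDate": "2016:02:03 18:47:10",
}
for hint in integrity_hints(example_tags):
    print(hint)
```

Like the Photoshop trace itself, these hints speak to integrity rather than authenticity: they show the file was re-saved, not that its content was altered.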

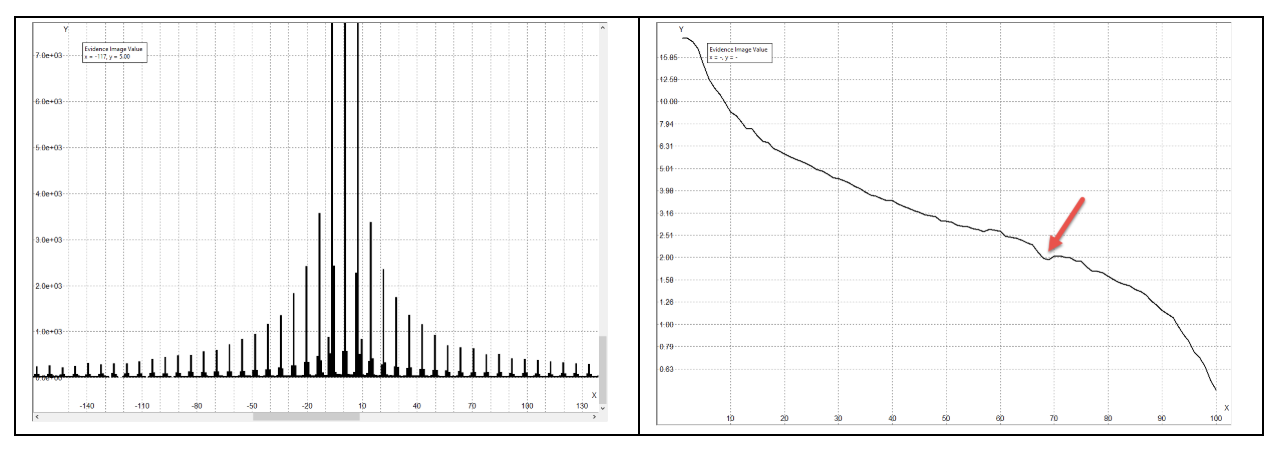

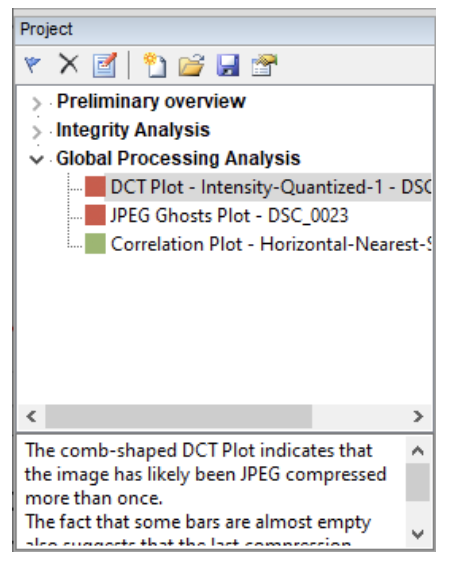
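One common way to reason about quantization tables is to check whether they match the standard IJG (libjpeg) tables scaled to some quality factor. A table that no IJG quality reproduces points to an encoder with its own tables, which is generally the case for Adobe Photoshop. The sketch below assumes the luminance table is given as 64 values in natural row-major order (DQT segments in the file store them in zigzag order, so a real reader would have to reorder them). The function names are hypothetical, and this is not necessarily how the JPEG QT filter or Authenticate’s quality estimate works.

```python
# Standard luminance quantization table from Annex K of the JPEG standard
# (ITU-T T.81), in natural row-major order.
IJG_LUMA = [
    16, 11, 10, 16, 24, 40, 51, 61,
    12, 12, 14, 19, 26, 58, 60, 55,
    14, 13, 16, 24, 40, 57, 69, 56,
    14, 17, 22, 29, 51, 87, 80, 62,
    18, 22, 37, 56, 68, 109, 103, 77,
    24, 35, 55, 64, 81, 104, 113, 92,
    49, 64, 78, 87, 103, 121, 120, 101,
    72, 92, 95, 98, 112, 100, 103, 99,
]


def ijg_table(quality):
    """Scale the Annex K table for a 1-100 quality factor, as libjpeg does."""
    quality = min(max(quality, 1), 100)
    scale = 5000 // quality if quality < 50 else 200 - 2 * quality
    # Round, then clamp to 1..255 (the baseline JPEG limit).
    return [min(max((b * scale + 50) // 100, 1), 255) for b in IJG_LUMA]


def estimate_ijg_quality(table):
    """Return (quality, exact) for the IJG quality closest to `table`.

    exact is False when no IJG quality reproduces the table exactly, which
    suggests an encoder that uses its own tables.
    """
    best_q, best_err = None, None
    for q in range(100, 0, -1):  # high to low, so ties go to the higher quality
        err = sum(abs(a - b) for a, b in zip(table, ijg_table(q)))
        if best_err is None or err < best_err:
            best_q, best_err = q, err
    return best_q, best_err == 0


print(estimate_ijg_quality(ijg_table(96)))
```

A non-exact match still returns the closest quality, which is one reasonable way to report an “estimated” quality for files written by other encoders.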

OK, we’ve had enough of metadata and related stuff; it is now time to move to the signal level. We run the JPEG Ghosts Plot, DCT Plot, and Correlation Plot filters. The first two reveal possible traces of double JPEG compression, which manifest as comb-shaped histograms in the DCT Plot and as a local minimum at quality 68 in the JPEG Ghosts Plot (whereas the current estimated JPEG quality is 96%, as shown in Authenticate’s top bar).
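Why do these traces appear? The toy simulation below quantizes synthetic scalar “DCT coefficients” with a coarse step (7) and then with a finer one (3), mimicking a low-quality save followed by a high-quality one. After double quantization some histogram bins stay empty, which produces the comb pattern, and requantizing with the first step yields an unusually small error, which is the local minimum a JPEG ghost exploits. This is a simplified illustration on random numbers with arbitrarily chosen steps, not the implementation of Authenticate’s DCT Plot or JPEG Ghosts Plot, which work on real 8x8 DCT blocks and quality factors.

```python
import random
from collections import Counter


def quantize(values, step):
    """Quantize and dequantize, as a JPEG encoder does to each DCT coefficient."""
    return [step * round(v / step) for v in values]


def level_histogram(values, step):
    """Count how often each quantization level (value / step) occurs."""
    return Counter(round(v / step) for v in values)


def ghost_curve(values, steps):
    """Mean squared error after requantizing `values` with each candidate step."""
    curve = {}
    for s in steps:
        requantized = quantize(values, s)
        curve[s] = sum((a - b) ** 2 for a, b in zip(values, requantized)) / len(values)
    return curve


rng = random.Random(0)
coeffs = [rng.gauss(0, 40) for _ in range(20000)]  # synthetic "DCT coefficients"

single = quantize(coeffs, 3)               # saved once with step 3
double = quantize(quantize(coeffs, 7), 3)  # saved with step 7, then step 3

levels_single = level_histogram(single, 3)
levels_double = level_histogram(double, 3)
print("single:", [levels_single[m] for m in range(1, 10)])
print("double:", [levels_double[m] for m in range(1, 10)])

ghost = ghost_curve(double, range(1, 13))
print({s: round(e, 2) for s, e in ghost.items()})
```

In this setup the error is zero at the last step (3) and its divisor 1, and it dips at step 7 compared with steps 6 and 8; a larger step corresponds to a lower JPEG quality, which is why the ghost minimum (quality 68 in the case) sits below the current quality (96).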

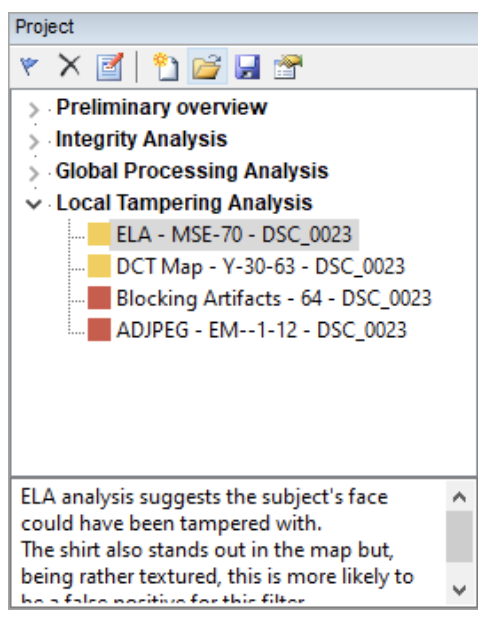

The Correlation Plot, on the other hand, does not reveal any inconsistency. This is how we reported this phase of the analysis in the project (we collapsed the previous folders for better readability). Notice that when you click on a bookmark, its description is shown at the bottom for live viewing and editing.

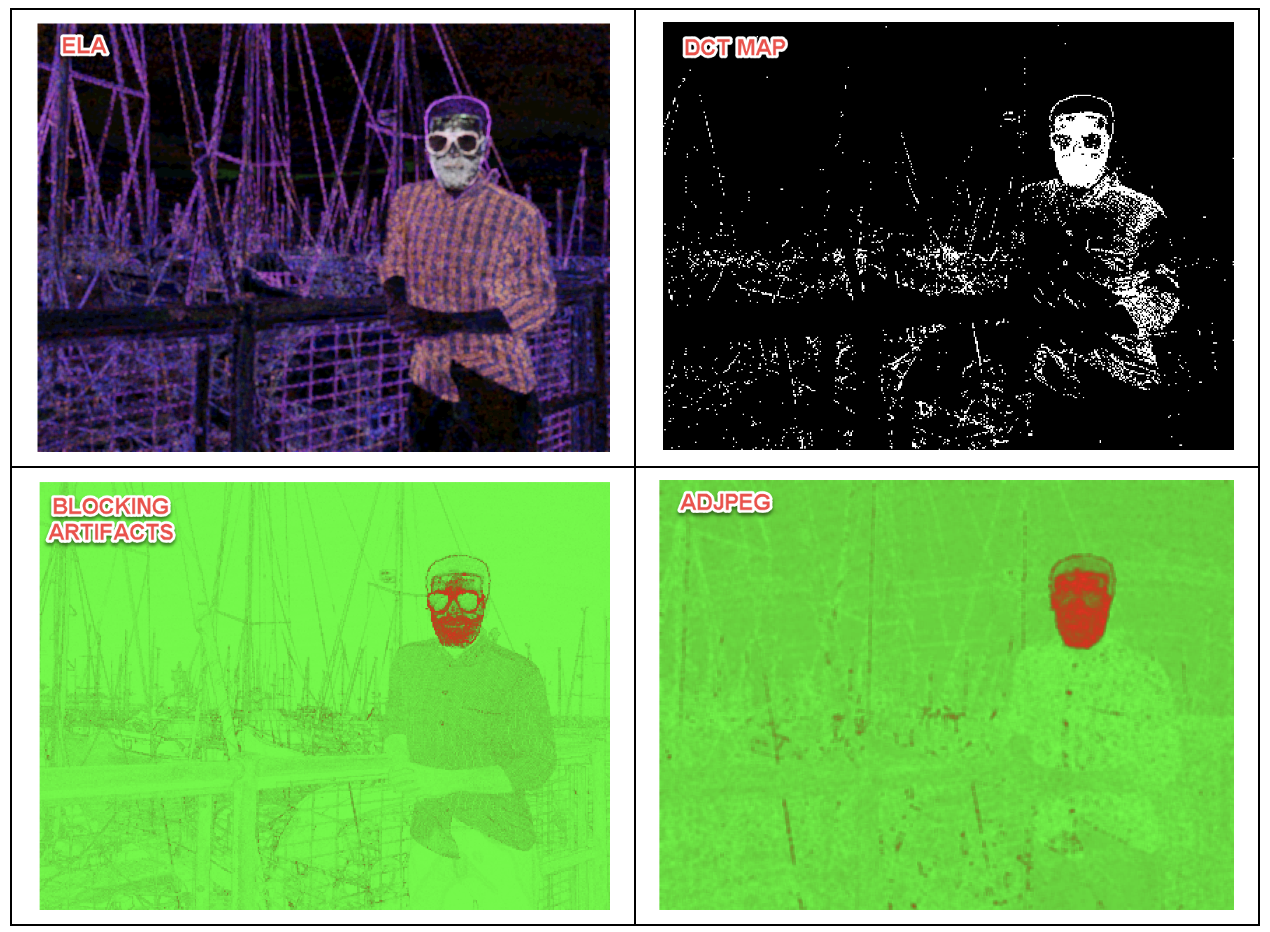

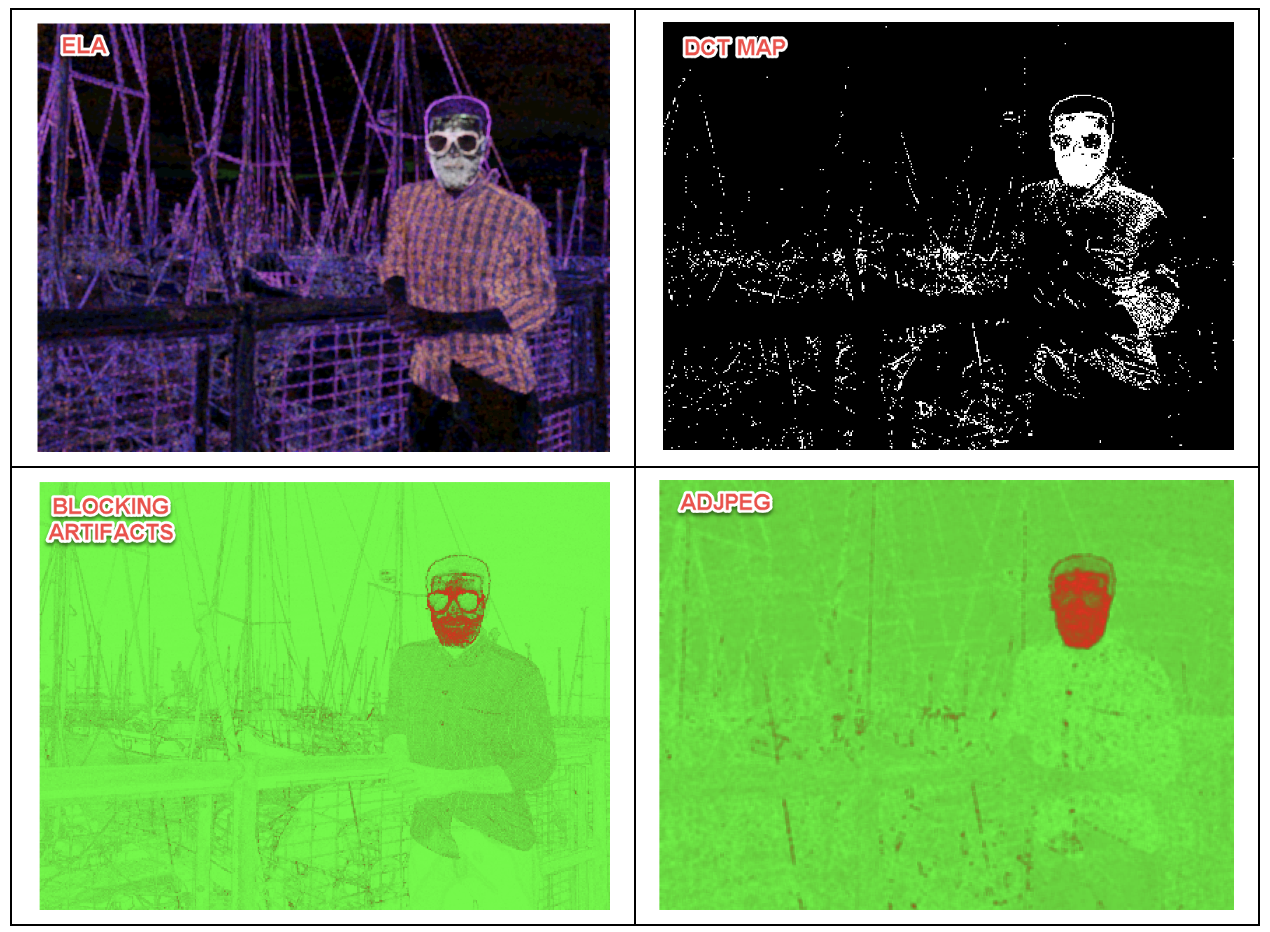

By now, we are quite confident that the image was originally a JPEG, was processed with Adobe Photoshop, and was re-saved as JPEG. The last question is: did any tampering occur in the process? To answer it, we need the filters in the Local Analysis category, which produce so-called forgery localization maps. In this case, two filters (ELA and DCT Map) provide maps where the subject’s face stands out quite evidently from the rest, and two other filters (Blocking Artifacts and ADJPEG) provide very compelling maps.

We should bookmark all these results; as shown below, we set the status of two filters to “Warning” and that of the other two to “To Check”, to reflect the different degrees of confidence provided by the maps.

Now we are ready to draw some conclusions on the case. We can include them in our project by simply creating a folder called “Conclusions” and writing our considerations in its description. Empty folders are a good way to add comments to your project that are not related to any specific filter.

This is how our final Project panel looks:

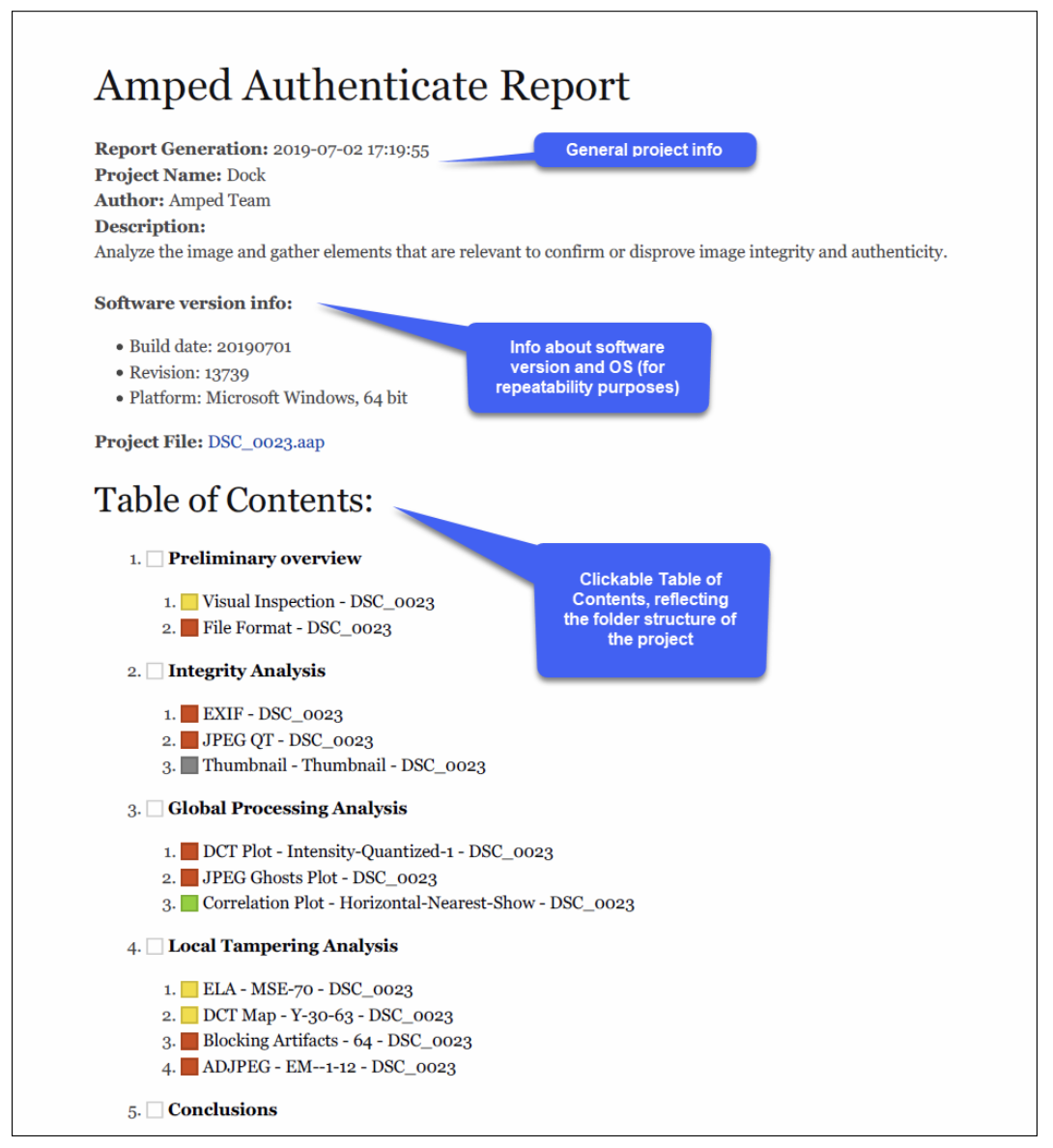

We are now ready to generate a report: we only need to click Tools -> Generate Report and choose the output format (PDF, HTML, or DOCX). Here, we’ll use some snapshots of the PDF export to show the result. Let’s begin with the report’s header, which includes general information about the project (you can set this up by clicking the rightmost button at the top of the Project panel, or with the CTRL+P keyboard shortcut), information about the software version and operating system, and a clickable table of contents that reflects the project structure.

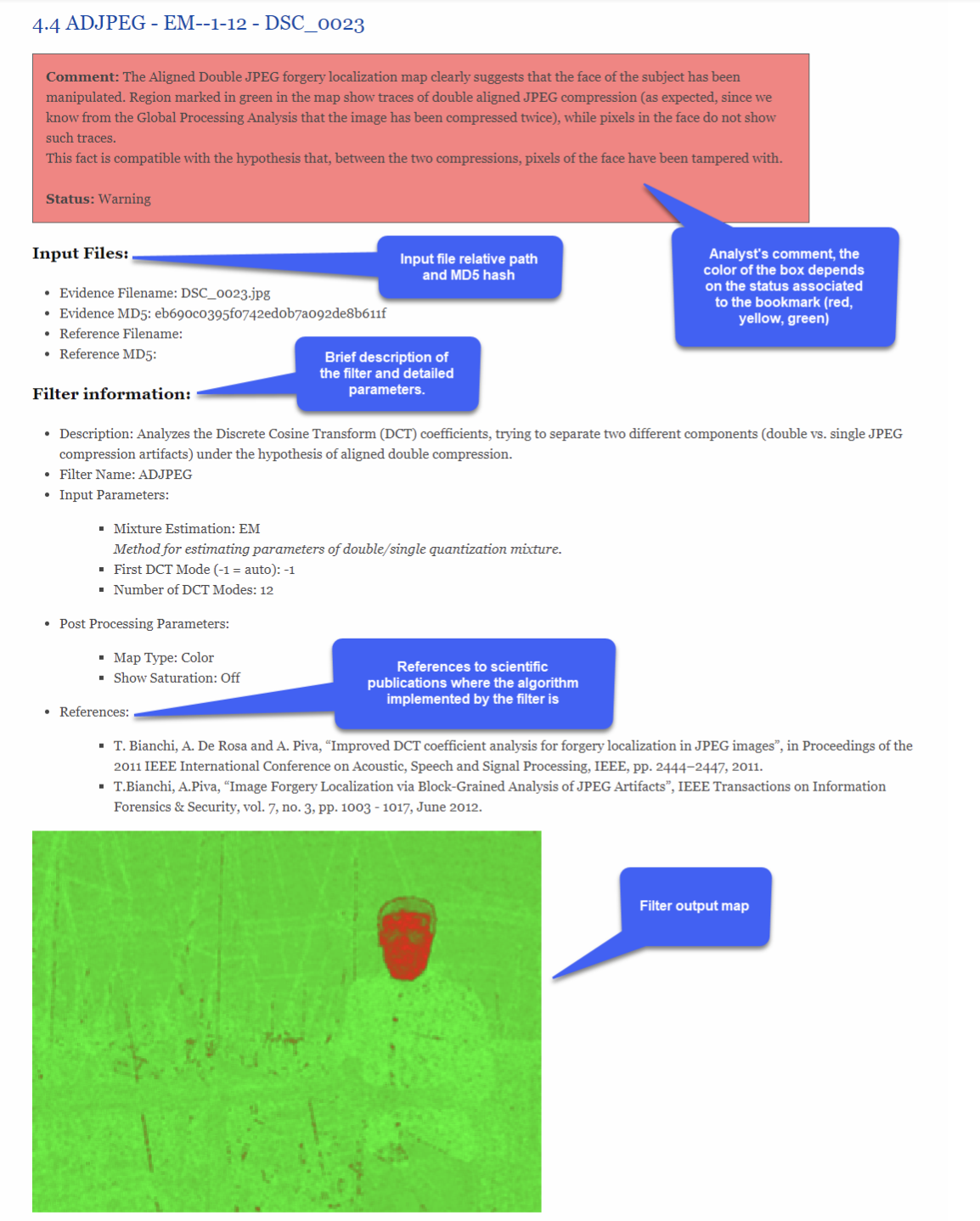

By clicking on a bookmark (or by simply scrolling through the pages of the document) you’ll reach each bookmark’s report; we provide an example below. First, the analyst’s comment is presented in a box whose color reflects the bookmark status (red for Warning, yellow for To Check, green for OK, gray if no status is set). The status is also written explicitly in the box (useful if you can’t print in color). Then the evidence and reference files associated with the bookmark are listed, along with their MD5 hash values. After that, information about the bookmarked filter and its settings is provided. To give you an idea of how detailed Amped Authenticate’s reporting is, the report for the project we created is 18 pages long. Should you need a less verbose report, you can tell Authenticate to omit the Input and/or Post Processing parameters for the bookmarks of your choice.
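Independently of the actual report layout, the structure of each bookmark entry described above (a status box, the input files with their MD5 values, the filter settings, and the option to omit parameters) can be sketched as a small rendering function. Everything here is hypothetical: the function, its arguments, the plain-text layout, and the parameter name are placeholders, the MD5 value is simply the digest of the string “abc”, and none of it reproduces Authenticate’s report generator.

```python
# Box color per bookmark status, as described in the text; gray if unset.
STATUS_COLORS = {"Warning": "red", "To Check": "yellow", "OK": "green"}


def render_entry(comment, status, files, filter_name, input_params,
                 post_params, show_input=True, show_post=True):
    """Return a plain-text sketch of one bookmark's report entry.

    files maps a role ("Evidence", "Reference") to a (path, md5_hex) pair.
    """
    color = STATUS_COLORS.get(status, "gray")
    lines = [f"[{color} box] Status: {status or 'Not set'}", f"    {comment}"]
    for role, (path, md5_hex) in files.items():
        lines.append(f"{role}: {path} (MD5 {md5_hex})")
    lines.append(f"Filter: {filter_name}")
    if show_input:  # a less verbose report can omit input parameters
        lines += [f"  Input {k} = {v}" for k, v in input_params.items()]
    if show_post:   # ...and/or post-processing parameters
        lines += [f"  Post Processing {k} = {v}" for k, v in post_params.items()]
    return "\n".join(lines)


# Placeholder values: the digest is just MD5("abc"), the parameter is made up.
files = {"Evidence": ("evidence.jpg", "900150983cd24fb0d6963f7d28e17f72")}
full = render_entry("The subject's face stands out in the map.", "Warning",
                    files, "ELA", {"hypothetical_param": 1}, {})
short = render_entry("The subject's face stands out in the map.", "Warning",
                     files, "ELA", {"hypothetical_param": 1}, {}, show_input=False)
print(full)
```

Writing the status as text next to the color mirrors the point made above: the entry stays readable in a black-and-white printout.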

There we go! We have carried out a complete analysis that highlighted several concerns about the integrity and authenticity of the image, and we have generated an effective forensic report presenting our findings.

Keep in mind, however, that Amped Authenticate features many more tools than those we’ve seen in this article. There are many filters we couldn’t cover here, as well as tools that automatically search the web for images captured with the same camera model (so we can use them as references for comparing metadata and file properties), a Google Images reverse search for images with similar content, and much more. A truly thorough analysis would have involved some of these tools as well.

To learn more about Amped Authenticate visit: https://ampedsoftware.com/authenticate or contact us at info@ampedsoftware.com.